Why Does Availability Alone Fail CDN Routing Decisions?

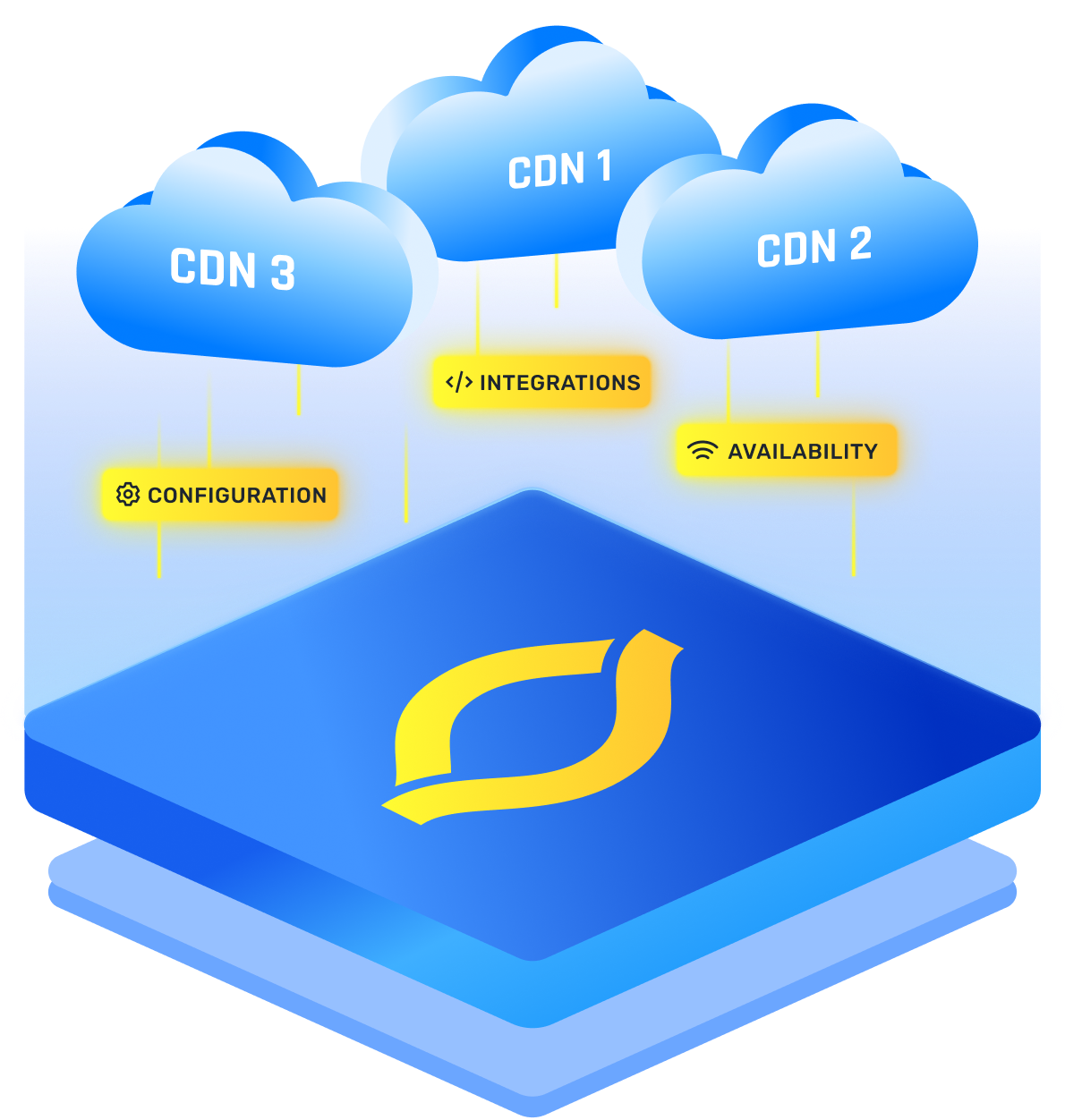

CDN availability is a binary check, but CDN routing is a quality decision. If you steer traffic based on “is this PoP (point of presence) up?” you only prove it can answer a health probe. You do not prove it can serve a real user quickly and reliably right now.

A PoP can be “available” while overloaded, stuck behind a bad ISP path, dropping packets, or painfully slow on cache misses. Users experience that as “your site is broken,” even if your monitoring says everything is green.

The fix is to treat availability as a safety gate, then route using CDN performance signals like network latency, error rate, packet loss, and capacity.

Why Availability Alone Fails CDN Routing Decisions

Availability answers a narrow question: can the edge respond at all? Most checks are an ICMP ping, a TCP connect, a TLS handshake, or a synthetic HTTP fetch. That is useful, but it compresses reality into up or down.

Your users don’t live in that world. They live in “fast enough” or “I’m refreshing the page.”

Availability-based decisions miss problems that are common and user-visible:

- Overload and queueing: the edge responds, but slowly.

- Partial degradation: some nodes or paths fail, so issues look random.

- Upstream pain: cache misses fetch from a mid-tier or origin route that is struggling.

- ISP-specific routing: one network gets a clean path, another gets a detour.

I think of it like this: availability tells you the shop is open. It does not tell you whether there is a two-hour line.

CDN Architecture Makes “Up” An Incomplete Signal

A modern CDN architecture is a chain. Even if the front door works, the rest of the chain can be hurting.

A real request often goes:

1. User connects to an edge PoP

2. Edge checks cache

3. Miss triggers a parent, mid-tier, or origin fetch

4. Response is streamed back to the user

Most health checks validate only step 1, and sometimes a tiny cached object in step 2. Real traffic hits steps 3 and 4 constantly, especially for dynamic pages and long-tail assets.

So you can route to an “available” PoP where every cache miss is slow because the upstream path is congested or your origin is rate-limiting. The edge stays up, but the experience still collapses.
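To make the gap concrete, here is a minimal sketch of the arithmetic. The function name and every number are made up for illustration: a probe that fetches a cached object only ever sees the hit latency, while real users get a blend of hits and misses.

```python
def expected_latency_ms(hit_ratio: float, hit_ms: float, miss_ms: float) -> float:
    """Average latency users see when a fraction of requests miss the cache."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * hit_ms + (1.0 - hit_ratio) * miss_ms


# Hypothetical PoP: cache hits are quick, misses go through a congested upstream.
probe_view_ms = 20.0  # what a health probe on a cached object measures
user_view_ms = expected_latency_ms(hit_ratio=0.90, hit_ms=20.0, miss_ms=800.0)

print(f"probe sees {probe_view_ms:.0f} ms, users average {user_view_ms:.0f} ms")
```

With these assumed numbers the probe reports about 20 ms while the user-side average is roughly five times higher, and the one request in ten that misses waits the full 800 ms.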

Gray Failures Beat Availability Checks

Most incidents are not full outages. They are gray failures: the system is technically responding, but the experience is degraded for a meaningful slice of users.

Availability-only routing is blind here because nothing crosses the “down” threshold.

Common patterns:

- Tail latency spikes where p50 looks fine but p95 is painful

- Packet loss or jitter that causes stalls and retries

- Peering issues that affect only certain ISPs or regions

- Hot spots where one PoP is saturated and nearby PoPs are calm

This is why CDN performance has to be measured like a user feels it, not like a server answers a probe.
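The tail-latency pattern is easy to see with a few lines of code. This is a small sketch using a nearest-rank percentile and invented latency samples, not data from a real PoP.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical request latencies (ms) for one PoP:
# 90 fast requests and 10 that stalled behind a lossy path.
latencies_ms = [40] * 90 + [1500] * 10

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(f"p50={p50} ms, p95={p95} ms")  # prints "p50=40 ms, p95=1500 ms"
```

The median says the PoP is fine. The p95 says one user in ten is waiting a second and a half, and a health probe that lands in the fast 90% would never notice.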

How It Works

If you only ask “is it up,” a PoP showing any of the patterns above still passes the check and keeps receiving traffic.

What Better CDN Routing Uses Instead

Keep CDN availability, but demote it to a guardrail. First exclude PoPs that are truly unhealthy, then rank the remaining ones by user-relevant signals.

The signals that usually matter most:

- network latency (including p95, not just average)

- connection setup time (TCP, TLS, QUIC)

- edge error rate (timeouts, resets, 5xx)

- packet loss and retransmits

- cache hit ratio plus origin fetch latency on misses

- PoP headroom (CPU, bandwidth, queue depth)

Where do you get those signals? Ideally from a mix of real user monitoring (what browsers and apps actually saw), targeted synthetic probes from multiple regions, and internal PoP telemetry. I’ll trust a “real users on ISP X are spiking” alert far more than a single green health check from one vantage point.

This is where CDN routing becomes personal: the “best” PoP depends on the user’s network, location, and current internet conditions.
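The gate-then-rank idea from this section can be sketched roughly as follows. The `PopStats` fields, the guardrail thresholds, and the PoP names are all assumptions chosen for illustration; a real system would feed these from its own RUM, probe, and telemetry pipelines, ideally per ISP or region.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class PopStats:
    name: str
    healthy: bool          # result of the plain availability check
    p95_latency_ms: float  # tail latency seen by real users
    error_rate: float      # fraction of requests ending in timeout, reset, or 5xx
    utilization: float     # 0.0 to 1.0, share of capacity in use


# Assumed guardrails; tune them for your own traffic.
MAX_ERROR_RATE = 0.02
MAX_UTILIZATION = 0.85


def choose_pop(candidates: Sequence[PopStats]) -> Optional[PopStats]:
    # Gate 1: availability removes broken targets outright.
    healthy = [p for p in candidates if p.healthy]
    if not healthy:
        return None
    # Gate 2: drop PoPs that are up but erroring or nearly saturated.
    eligible = [
        p for p in healthy
        if p.error_rate <= MAX_ERROR_RATE and p.utilization <= MAX_UTILIZATION
    ]
    # If nothing passes the stricter gate, fall back to any healthy PoP.
    pool = eligible or healthy
    # Rank: the lowest tail latency wins.
    return min(pool, key=lambda p: p.p95_latency_ms)


pops = [
    PopStats("fra", True, 180.0, 0.001, 0.60),
    PopStats("ams", True, 95.0, 0.002, 0.55),
    PopStats("lhr", True, 60.0, 0.001, 0.97),   # fastest, but nearly saturated
    PopStats("cdg", False, 30.0, 0.000, 0.10),  # failing its health check
]
print(choose_pop(pops).name)  # prints "ams"
```

Keeping the gates separate from the ranking is deliberate: a PoP that fails a guardrail cannot win on a good latency number alone.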

Practical CDN Strategies That Actually Work

Here are CDN strategies that prevent “available but awful” routing without making your system fragile.

- Gate With Availability, Then Steer With Performance

  Availability removes broken targets. Performance chooses the best target. If you blend everything into one fuzzy score, you can accidentally send traffic to a barely-alive PoP.

- Optimize For The Tail, Not The Median

  Users complain about p95 and p99. Route using tail latency and real error rates, not just “it responded once.”

- Add Stability Controls

  Fast switching can cause flapping and herding. Use hysteresis, cooldowns, and gradual shifts so routing changes are calm and reversible.

- Respect Capacity And ISP Reality

  A PoP near saturation is a future incident, even if it is up. Also, do not assume one map fits all: ISP-aware steering avoids sending certain networks into consistently bad paths.
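As one way to picture the stability controls, here is a minimal sketch that combines hysteresis with a cooldown. The class name, the 20% improvement margin, and the 60-second cooldown are hypothetical choices, and the input is assumed to contain only PoPs that already passed the availability gate. Gradual traffic shifting is left out to keep the example short.

```python
class StickyRouter:
    """Keeps the current PoP unless a rival is clearly better and the cooldown has passed."""

    def __init__(self, min_improvement=0.2, cooldown_s=60.0):
        self.min_improvement = min_improvement  # rival must beat current p95 by this fraction
        self.cooldown_s = cooldown_s            # minimum time between voluntary switches
        self.current = None
        self.last_switch_at = None

    def _switch(self, pop, now_s):
        self.current = pop
        self.last_switch_at = now_s

    def update(self, p95_by_pop, now_s):
        """p95_by_pop maps PoP name to p95 latency (ms) for PoPs that passed the gate."""
        if not p95_by_pop:
            raise ValueError("no eligible PoPs")
        best = min(p95_by_pop, key=p95_by_pop.get)
        # First decision, or the current PoP fell out of the eligible set: move immediately.
        if self.current is None or self.current not in p95_by_pop:
            self._switch(best, now_s)
            return self.current
        in_cooldown = now_s - self.last_switch_at < self.cooldown_s
        threshold = p95_by_pop[self.current] * (1 - self.min_improvement)
        # Hysteresis: a marginally better rival is not worth the churn.
        if not in_cooldown and p95_by_pop[best] < threshold:
            self._switch(best, now_s)
        return self.current


router = StickyRouter(min_improvement=0.2, cooldown_s=60.0)
print(router.update({"ams": 100, "fra": 95}, now_s=0))    # fra: first decision
print(router.update({"ams": 90, "fra": 95}, now_s=120))   # fra: ams is better, but not by 20%
print(router.update({"ams": 60, "fra": 95}, now_s=130))   # ams: clearly better, cooldown over
print(router.update({"ams": 200, "fra": 95}, now_s=150))  # ams: still inside the cooldown
```

Note that the safety gate overrides the cooldown: if the current PoP disappears from the eligible set, the router moves right away instead of waiting.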

Do that, and availability stops being your decision-maker and becomes what it should be: the minimum bar, while routing focuses on delivering a good experience.