Optimizing CDN Architecture: Enhancing Performance and User Experience

A well-designed CDN architecture improves availability, reduces latency, and optimizes traffic routes for better performance. In this blog, we break down various CDN architectures and their pros and cons, define the four pillars of CDN design, and introduce a disruptive solution that provides consistent security and traffic control services on top of multiple vendors without compromising your Edge services. How? Keep reading.

What is a CDN?

A content delivery network (CDN) is a distributed network of servers strategically located across multiple geographical locations to deliver web content to end users more efficiently. CDNs cache content on edge servers distributed globally, reducing the distance between users and the content they want.

CDNs use load-balancing techniques to distribute incoming traffic across servers housed in Points of Presence (PoPs), which bring content closer to end users and improve overall performance.

What is CDN Architecture?

CDN architecture serves as a blueprint or plan that guides the distribution of CDN provider PoPs. The two fundamentals of a CDN architecture revolve around distribution and capacity.

The distribution aspect determines how widely the PoPs are scattered and how effectively they cover different regions. Capacity determines how much content a PoP can store in its cache and how efficiently it can serve that content to many users simultaneously.

A PoP's capacity depends on factors such as CPU, memory, bandwidth, and the number of machines.

CDN architecture also focuses on caching, load balancing, routing, and optimizing content delivery, which can be measured by two metrics: cache offloading and round-trip time (RTT).

RTT is the time, in milliseconds (ms), it takes for a data packet to travel from a starting point to a destination and back again. A lower RTT indicates a faster network response time and happier end users.
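
To make the link between distance and RTT concrete, here is a minimal sketch that estimates the best-case RTT from propagation delay alone. It assumes light travels through optical fiber at roughly 200,000 km/s and ignores routing, queuing, and processing delays, so real RTTs will be higher. The function name and the sample distances are illustrative.

```python
# Rough lower bound on RTT from propagation delay alone.
# Assumption: signals travel through optical fiber at roughly 200,000 km/s
# (about two thirds of the speed of light in a vacuum).
FIBER_SPEED_KM_PER_S = 200_000


def min_rtt_ms(distance_km: float) -> float:
    """Best-case round trip in milliseconds over a direct fiber path."""
    one_way_seconds = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


# Illustrative distances, not measurements from any real CDN.
for label, km in [("nearby PoP", 100), ("distant PoP", 6_000)]:
    print(f"{label} ({km} km): at least {min_rtt_ms(km):.1f} ms")
```

Even in this idealized model, a PoP 6,000 km away cannot answer faster than about 60 ms, while a PoP 100 km away can respond in about 1 ms.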

Cache offloading measures the cache's ability to serve content without having to fetch it from the origin.
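
One common way to express cache offloading is as the share of requests that never reach the origin. The sketch below assumes you can count total requests at the edge and requests forwarded to the origin. The function name and the sample numbers are hypothetical, and some vendors compute offload on bytes rather than requests.

```python
def cache_offload(total_requests: int, origin_requests: int) -> float:
    """Fraction of requests served from cache without contacting the origin."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    if not 0 <= origin_requests <= total_requests:
        raise ValueError("origin_requests must be between 0 and total_requests")
    return 1 - origin_requests / total_requests


# Hypothetical numbers: 1,000,000 edge requests, 50,000 forwarded to origin.
ratio = cache_offload(1_000_000, 50_000)
print(f"Cache offload: {ratio:.1%}")
```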

If there’s one thing a customer doesn’t have time for, it’s downtime. Five Nines availability (99.999%), often called "the gold standard", limits downtime to about 5.26 minutes per year and helps critical operations continue without disruption.
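
The 5.26-minute figure follows directly from the availability percentage. A short sketch of the arithmetic, assuming an average year of 365.25 days:

```python
# Assumption: an average year of 365.25 days (525,960 minutes).
MINUTES_PER_YEAR = 365.25 * 24 * 60


def annual_downtime_minutes(availability_percent: float) -> float:
    """Maximum downtime per year allowed by a given availability target."""
    return (1 - availability_percent / 100) * MINUTES_PER_YEAR


for target in (99.9, 99.99, 99.999):
    minutes = annual_downtime_minutes(target)
    print(f"{target}% availability allows {minutes:.2f} minutes of downtime per year")
```

Each additional nine cuts the allowed downtime by a factor of ten, which is why the jump from three nines to five nines is so demanding.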

All these elements combined serve as the blueprint of a CDN architecture.


The Core Components of CDN Architecture

To achieve optimal CDN performance, several core components must be integrated into CDN network architecture:

1. Caching Mechanisms

Caching is at the heart of CDN operations. It involves storing copies of content on edge servers closer to end-users.

Effective caching reduces latency and offloads traffic from the origin server. Advanced caching strategies, such as cache hierarchies and dynamic content caching, further enhance performance by ensuring frequently accessed data is always readily available.
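
As an illustration of the basic mechanism, here is a minimal sketch of an edge cache that combines a time-to-live (TTL) with least-recently-used (LRU) eviction. This is a simplified teaching model, not how any particular CDN implements caching. The class name `EdgeCache` and the `fetch_from_origin` callback are hypothetical.

```python
import time
from collections import OrderedDict


class EdgeCache:
    """Toy edge cache: entries expire after a TTL, and the least recently
    used entry is evicted when the cache is full."""

    def __init__(self, capacity, ttl_seconds, clock=time.monotonic):
        self.capacity = capacity
        self.ttl = ttl_seconds
        self.clock = clock
        self._items = OrderedDict()  # key -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, key, fetch_from_origin):
        now = self.clock()
        entry = self._items.get(key)
        if entry is not None and entry[0] > now:
            # Fresh copy at the edge: serve it and mark it recently used.
            self._items.move_to_end(key)
            self.hits += 1
            return entry[1]
        # Missing or expired: go back to the origin and store the result.
        self.misses += 1
        body = fetch_from_origin(key)
        self._items[key] = (now + self.ttl, body)
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict least recently used
        return body


cache = EdgeCache(capacity=2, ttl_seconds=60)
for path in ["/logo.png", "/logo.png", "/app.js"]:
    cache.get(path, fetch_from_origin=lambda p: f"body of {p}")
print(f"hits={cache.hits} misses={cache.misses}")
```

Every hit is a request the origin never sees, which is where both the latency reduction and the origin offload come from.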

2. Load Balancing

Load balancing distributes incoming traffic across multiple servers to avoid overloading any single server. This not only improves performance but also enhances reliability by ensuring that if one server fails, others can take over seamlessly.

Modern CDNs employ sophisticated algorithms to manage load balancing, taking into account real-time traffic conditions and server health.
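
A simple example of a health-aware strategy is "least connections": skip unhealthy servers, then pick the one with the fewest active connections. The sketch below is only one of many possible algorithms, and the `Server` fields and server names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    healthy: bool = True
    active_connections: int = 0


def pick_server(servers):
    """Least-connections selection among healthy servers only."""
    healthy = [s for s in servers if s.healthy]
    if not healthy:
        raise RuntimeError("no healthy servers available")
    return min(healthy, key=lambda s: s.active_connections)


# Hypothetical pool inside a single PoP.
pool = [
    Server("edge-1", healthy=True, active_connections=5),
    Server("edge-2", healthy=True, active_connections=2),
    Server("edge-3", healthy=False, active_connections=0),
]
print(pick_server(pool).name)

# If edge-2 fails its health check, traffic shifts to the next best server.
pool[1].healthy = False
print(pick_server(pool).name)
```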

3. Security Features

Security is a fundamental aspect of CDN architecture. CDNs incorporate various security measures such as DDoS protection, Web Application Firewalls (WAF), and SSL/TLS encryption to safeguard data and ensure secure content delivery.

These features protect against cyber threats and ensure data integrity and privacy for end-users.

4. Real-Time Analytics

Real-time analytics provide insights into traffic patterns, user behavior, and system performance.

By continuously monitoring these metrics, CDNs can dynamically adjust configurations to optimize content delivery. Analytics also help in identifying potential issues before they impact users, allowing for proactive management.

5. Global Distribution of PoPs

The strategic placement of PoPs is essential for minimizing latency. A well-distributed CDN network architecture aims to serve content from the location geographically closest to the user.

This global distribution is particularly important for serving a diverse, international audience and maintaining consistent performance across different regions and at different times of day.
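
To illustrate the idea of serving from the geographically closest location, the sketch below picks the nearest PoP by great-circle (haversine) distance and falls back to the next closest one when a PoP is unavailable. It is a deliberate simplification: production CDNs typically route using DNS or anycast and network conditions rather than pure geography. The PoP names and approximate coordinates are illustrative.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0

# Hypothetical PoPs with approximate (latitude, longitude) coordinates.
POPS = {
    "frankfurt": (50.11, 8.68),
    "new-york": (40.71, -74.01),
    "singapore": (1.35, 103.82),
}


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1 = map(radians, a)
    lat2, lon2 = map(radians, b)
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def nearest_pop(user, pops=POPS, unavailable=frozenset()):
    """Closest available PoP to the user's location."""
    candidates = {name: loc for name, loc in pops.items() if name not in unavailable}
    if not candidates:
        raise RuntimeError("no PoP available")
    return min(candidates, key=lambda name: haversine_km(user, candidates[name]))


paris = (48.86, 2.35)
print(nearest_pop(paris))
print(nearest_pop(paris, unavailable={"frankfurt"}))
```

The second call shows the cost of a sparse footprint: when the closest PoP is out of service, the user is rerouted to a location thousands of kilometers farther away.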

The Four Pillars of CDN Design

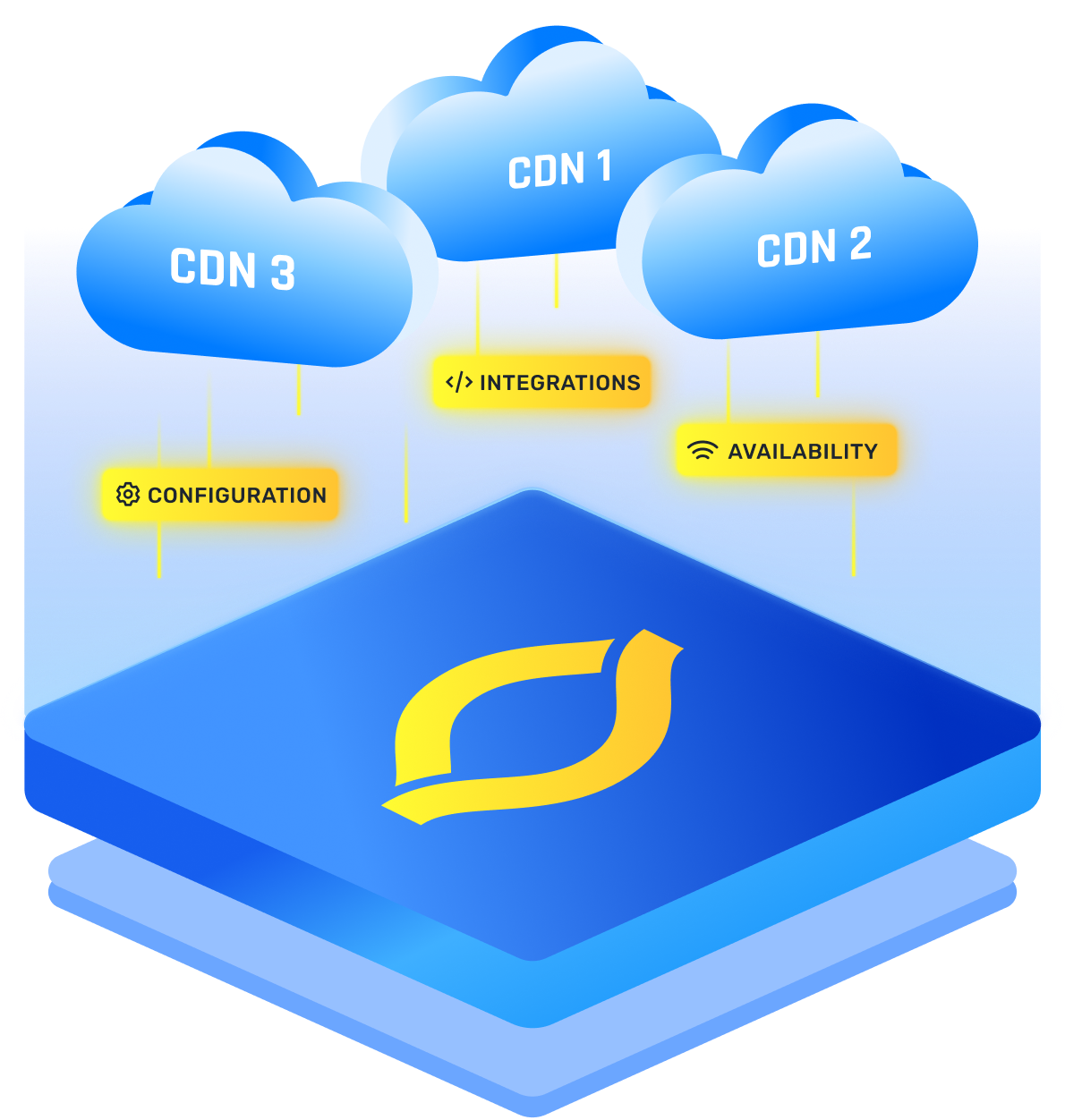

CDN architecture can be broken down into several building blocks, known as the Four Pillars of CDN Design.

Reliability

Reliability is the foundation of maintaining a consistent user experience. When an edge server goes down, end users in the affected region may experience an increase in latency for that specific location. This is because their requests need to be rerouted to an alternative server, which could be much farther away from the user's location.

The CDN should be designed with content propagation in mind, so that content is replicated across multiple PoPs. This enhances content availability, mitigates the impact of server failures, minimizes the added latency of traffic rerouting, and greatly assists in the recovery and resilience of the CDN.

Performance

The number and distribution of PoPs play a crucial role in performance. Having more PoPs in diverse locations reduces latency by bringing content closer to end-users and minimizing the distance data needs to travel.

Ensure that your CDN provider has a broad and well-distributed network coverage with an extensive number of PoPs. A larger network footprint allows for content to be cached closer to end-users, reducing latency and improving performance.

Scalability

When planning for scalability, it’s essential to evaluate your business roadmap and identify target regions where you plan to expand. Your CDN should have edge servers strategically placed in these locations. These optimizations ensure that your CDN can handle growing user demands while maintaining fast and efficient content delivery.

Responsiveness

Edge caching is another fundamental building block that helps enhance responsiveness. By caching frequently accessed content at edge servers within PoPs, CDNs reduce the need for content retrieval from the origin server. This minimizes response times, improves content delivery speed, and enhances overall responsiveness for your end-users.


CDN Topology

CDN topology describes how the network is organized and how its components are interconnected to efficiently deliver content to end-users. Here are a few examples.

Centralized CDN

In a centralized CDN, the emphasis is on larger PoPs strategically located in key countries/cities, while a distributed CDN aims to have a presence in several PoPs in every city to minimize the distance between content servers and end users.

Advantages

- Since the PoPs are much larger, there is a significant increase in the cache capacity at the Edge.

- Agile configuration deployment - since there are far fewer PoPs, configuration deployments are much simpler and faster.

- Reduced maintenance costs - the CDN needs to maintain a presence in far fewer data centers.

Disadvantages

- Higher RTT due to fewer PoPs. On average, the “edge” PoPs of a centralized CDN tend to be located farther away from the end user compared to a distributed CDN.

- Inconsistencies in performance across different regions - a small number of PoPs can create significant differences in performance between geo-locations.

The Distributed CDN

In a Distributed CDN, PoPs are strategically positioned in as many different regions or network locations as possible to minimize latency and improve content delivery performance. The focus is on providing optimal physical proximity, so it’s not uncommon to see many PoPs grouped together within a small radius of each other.

A Distributed CDN is more affected by the local network infrastructure it leases, and there is a significant disparity in that infrastructure between developing and developed countries.

Advantages

- Closer physical proximity minimizes latency (RTT) - in a distributed CDN, the PoPs are as close as possible to the end user.

- Faster speeds in low-connectivity areas - the impact of a distributed CDN is even greater in low-connectivity areas, since the RTT to a centralized CDN's edge PoP is significantly higher there than the RTT to a distributed CDN's PoP.

Disadvantages

- Distributed PoPs create more complexity and increase maintenance costs - the CDN is required to maintain a presence in more data centers.

- Deploying new configurations is more cumbersome - since the network is much more distributed, configuration updates, purges, and other operations take more time (more locations and servers must be kept in sync).

- Cache management becomes an issue - because a distributed architecture aims to get as close as possible to the end user, each PoP tries to keep its content as 'hot' as possible, which leads to many small PoPs with relatively small coverage areas holding the same content.

In this scenario, a cache miss will cause the PoP to access a remote data center to fetch the content.

- Higher cache miss rate at the edge compared to a centralized solution - in a distributed CDN the PoPs are much smaller than in a centralized CDN, so the chance of a cache miss at the Edge increases. Each miss is also more costly because of the cache management issue mentioned above.

In the early days of the CDN industry, successfully constructing a network that brought content as close as possible to the end-user was considered a significant commercial achievement. However, as the years passed, the quality of network infrastructure improved significantly, reducing the advantages of Distributed CDNs over Centralized ones.

CDN Architecture Optimization

If you opt for a distributed CDN, you might want to consider utilizing cache tiers within the CDN. Cache tiers are a way to organize the caching infrastructure to improve cache hit rates and overall performance. This is where Origin Shield comes into play.

Origin Shield is a crucial component of the cache tier architecture in a distributed CDN. It is a caching mechanism utilized in CDNs to prevent the origin server from being overwhelmed by a high volume of requests during cache misses.

The Origin Shield acts as a buffer between the edge PoPs and the origin server. It drives down the number of requests sent to the origin, which reduces cost and improves the overall efficiency of content delivery within the CDN topology.
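
The effect of an Origin Shield can be sketched with a toy model in which every edge PoP starts with a cold cache and requests the same object. Without a shield, each edge miss reaches the origin. With a shield, only the first miss does. The class and function names here are hypothetical, and the model ignores TTLs, eviction, and concurrent requests.

```python
class Origin:
    """Origin server that counts how many requests reach it."""

    def __init__(self):
        self.requests = 0

    def fetch(self, key):
        self.requests += 1
        return f"content for {key}"


class CacheTier:
    """Cache that forwards misses to an upstream tier (a shield or the origin)."""

    def __init__(self, upstream):
        self.upstream = upstream
        self.store = {}

    def fetch(self, key):
        if key not in self.store:
            self.store[key] = self.upstream.fetch(key)
        return self.store[key]


def origin_requests(num_edges, use_shield):
    """Origin hits when every edge PoP requests the same object once."""
    origin = Origin()
    upstream = CacheTier(origin) if use_shield else origin
    edges = [CacheTier(upstream) for _ in range(num_edges)]
    for edge in edges:
        edge.fetch("/video.mp4")
    return origin.requests


print("without shield:", origin_requests(50, use_shield=False))
print("with shield:   ", origin_requests(50, use_shield=True))
```

In this simplified model, 50 cold edge PoPs generate 50 origin requests without a shield and a single origin request with one, which is the offload effect described above.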
