DNS-Based vs Application-Level Traffic Control for CDNs

DNS vs application-level CDN traffic control explained. Compare routing, speed, resilience, and cost control.

You press Enter. The page shows up. That calm moment hides a noisy back room. A system just chose one server out of many. That choice decides speed, uptime, cost, and how much control you keep when something breaks.

When you run a CDN, traffic control is your steering wheel. You can steer early, before any connection exists, using DNS. You can also steer later, after an edge node can see the real request, using Layer 7 logic. Both approaches solve real problems. Both can also surprise you if you expect perfect behavior.

The Four Moments Where Steering Can Happen

A request goes through a short chain. Each link gives you a different kind of control.

- Name lookup

Your user asks DNS for an IP address. DNS can return different answers for different users.

- Network entry

Packets travel to that IP. If the IP uses Anycast, the internet chooses a nearby edge location.

- Edge handshake

The user connects and finishes TLS. Now an edge server can measure live timing and errors.

- Request handling

Now the edge can read paths, headers, cookies, and query strings. Now routing can depend on what the user asked for.

Keep one rule in your head: the later you decide, the more context you can use. The earlier you decide, the more you depend on caches and network guesses.

A Quick Comparison You Can Point At

Instead of thinking “DNS or Layer 7”, think “where does the choice happen”.

| Where the choice happens | What you change | What you can see | What slows you down |
|---|---|---|---|
| DNS answer time | A and AAAA records | Resolver location, basic health | Caching outside your control |
| Anycast entry | BGP route announcements | Network topology only | Peering policy, route updates |
| Edge Layer 7 | Per request routing | Full request, real measurements | CPU cost, rule mistakes |
| Control APIs | Weights and policies | Your own metrics | Rollout speed, safety checks |


Steering Before The Connection Exists

Most DNS steering is done with a Global Server Load Balancer (GSLB). The authoritative DNS server for your domain does not return one fixed IP. It returns an IP that matches a rule, such as region, load, or health.

That is DNS-based traffic routing in plain terms: you steer by choosing the address your user receives.
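To make the idea concrete, here is a minimal Python sketch of the kind of rule a GSLB might apply. The pool list, region codes, and fallback order are hypothetical, and the decision is keyed on the resolver's region, since that is usually all the DNS server can see. Real GSLB products expose their own configuration, not this function.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Pool:
    name: str
    region: str
    ip: str
    healthy: bool = True


# Hypothetical pools, using documentation addresses (RFC 5737).
POOLS = [
    Pool("eu-west", "EU", "192.0.2.10"),
    Pool("us-east", "NA", "198.51.100.10"),
    Pool("ap-south", "AS", "203.0.113.10"),
]

# Hypothetical fallback order when the preferred region has no healthy pool.
FALLBACK: Dict[str, List[str]] = {
    "EU": ["NA", "AS"],
    "NA": ["EU", "AS"],
    "AS": ["EU", "NA"],
}


def answer_a_record(resolver_region: str, pools: List[Pool] = POOLS) -> Optional[str]:
    """Return the IP this toy GSLB would put in an A record.

    The input is the resolver's region, not the user's, because the
    resolver is usually the only client the DNS server talks to.
    """
    for region in [resolver_region] + FALLBACK.get(resolver_region, []):
        for pool in pools:
            if pool.region == region and pool.healthy:
                return pool.ip
    return None  # nothing healthy; a real GSLB might serve a static fallback record
```

Marking a pool unhealthy changes the answer for new queries only; resolvers that already cached the old IP keep using it until their cache expires.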

What You Gain With DNS Control

DNS gives you explicit steering. You can decide, on purpose, where traffic should go. You can also do that without changing application code.

The wins usually look like these:

- Weighted splits

You can send 80 percent of traffic to one region and 20 percent elsewhere.

- Slow and safe migrations

You can move traffic in steps while caches warm up.

- Provider switching

You can swap CDN vendors with a DNS change.

- Location fencing

You can keep traffic inside a country or inside a compliance zone.
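A weighted split can be sketched as a per-query random draw. The addresses, the 80/20 weights, and the helper names below are hypothetical and only illustrate the technique.

```python
import random
from collections import Counter
from typing import Dict

# Hypothetical weights: 80 percent of answers to one region, 20 percent to another.
WEIGHTS: Dict[str, int] = {"192.0.2.10": 80, "198.51.100.10": 20}


def weighted_answer(weights: Dict[str, int], rng: random.Random) -> str:
    """Pick one address for a single DNS query, proportionally to its weight."""
    addresses = list(weights)
    return rng.choices(addresses, weights=[weights[a] for a in addresses], k=1)[0]


def simulate(weights: Dict[str, int], queries: int, seed: int = 0) -> Counter:
    """Count how often each address is handed out over many queries."""
    rng = random.Random(seed)
    return Counter(weighted_answer(weights, rng) for _ in range(queries))
```

The split applies per DNS query, not per user. A busy resolver caches one answer and hands it to everyone behind it until the TTL ends, so the real user split can drift from the configured 80/20, especially when a few large resolvers carry most of your traffic.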

Where DNS Starts To Hurt

DNS is fast because of caching. The same caching makes steering slow.

If a region fails and you update DNS, many users keep the old answer until cache time expires. Even with a low TTL, some resolvers keep records longer than you asked. Some devices also cache in their own way.

That creates the long tail problem. A chunk of users recover fast. Another chunk keeps hitting the dead place for longer than you want.
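One rough way to reason about the long tail is to model each resolver's cache lifetime as the larger of your TTL and any minimum the resolver enforces. The resolver names, floors, and traffic shares below are invented for illustration, and the model ignores device-level caches, so real staleness can only be longer than what it predicts.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Resolver:
    name: str
    cached_at: float      # seconds relative to your DNS change (negative = before it)
    min_ttl_floor: float  # hypothetical minimum this resolver applies to every record
    traffic_share: float  # fraction of your users behind this resolver


def expires_at(r: Resolver, record_ttl: float) -> float:
    """When this resolver drops the old answer: its own floor wins over a lower TTL."""
    return r.cached_at + max(record_ttl, r.min_ttl_floor)


def stale_share(resolvers: List[Resolver], record_ttl: float, t: float) -> float:
    """Fraction of traffic still sent to the old answer t seconds after the change."""
    return sum(r.traffic_share for r in resolvers if expires_at(r, record_ttl) > t)


# Invented example: a 30 second TTL and one resolver that enforces a 300 second floor.
RESOLVERS = [
    Resolver("isp-a", cached_at=-10, min_ttl_floor=0, traffic_share=0.6),
    Resolver("isp-b", cached_at=-5, min_ttl_floor=300, traffic_share=0.3),
    Resolver("isp-c", cached_at=-25, min_ttl_floor=0, traffic_share=0.1),
]
```

With these numbers, 70 percent of traffic has moved within 20 seconds, but the resolver with a 300 second floor keeps 30 percent of users on the old answer for almost five minutes.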

DNS also has a visibility gap. The DNS server often sees the recursive resolver, not the user device. If the resolver sits far away, your location guess can be wrong, and latency can climb.

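A feature called EDNS0 Client Subnet (ECS, defined in RFC 7871) can help with mapping: the resolver forwards a truncated prefix of the client's address to your DNS server. Adoption varies, and privacy settings can remove the hint. So you cannot assume the hint always arrives.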
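The "use the hint if present, else fall back to the resolver" logic can be sketched with Python's standard `ipaddress` module. The geo table is hypothetical and uses documentation prefixes; a real mapping system would rely on a much larger, measured dataset.

```python
import ipaddress
from typing import Optional

# Hypothetical geo table keyed by prefix (documentation ranges from RFC 5737).
GEO_TABLE = {
    ipaddress.ip_network("192.0.2.0/24"): "EU",
    ipaddress.ip_network("198.51.100.0/24"): "NA",
    ipaddress.ip_network("203.0.113.0/24"): "AS",
}


def locate(resolver_ip: str, ecs_subnet: Optional[str] = None) -> Optional[str]:
    """Prefer the ECS hint when present; otherwise map the resolver's own address."""
    if ecs_subnet is not None:
        hint = ipaddress.ip_network(ecs_subnet, strict=False)
        for prefix, region in GEO_TABLE.items():
            # subnet_of() raises TypeError across IP versions, so compare versions first.
            if hint.version == prefix.version and hint.subnet_of(prefix):
                return region
    addr = ipaddress.ip_address(resolver_ip)
    for prefix, region in GEO_TABLE.items():
        if addr in prefix:
            return region
    return None  # unknown location; a real system would use a default policy
```

When the hint is missing, or does not match anything known, the answer depends only on where the resolver sits, which is the visibility gap described above.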

How To Use DNS Without Getting Trapped

Two habits make DNS steering safer.

- Use DNS for coarse choices

Let DNS pick a provider or a broad region. Let the edge handle fine decisions.

- Treat TTL as a hint, not a promise

Design for a few minutes of stale answers. Your failover plan should still work.

Steering After You Can See The Request

Once the request reaches an edge node, the edge usually acts like a reverse proxy. The edge terminates TLS, reads the request, then decides what happens next.

That decision is application-level traffic control. You steer with request data and live performance signals.

What Layer 7 Can Do That DNS Cannot

DNS can only choose an address. Layer 7 can choose a path, a backend, a cache rule, and a retry plan.

Four common moves become possible:

- Request aware routing

You can treat /static and /api differently, and you can treat paid users differently.

- Experiment routing

You can send beta traffic to a canary cluster based on cookies or headers.

- Live failover inside the edge

If one origin pool fails, the edge can retry another pool in seconds, without touching DNS.

- Connection reuse

The edge can keep warm connections to origin, which saves time on backend handshakes.
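A minimal sketch of request-aware and experiment routing follows. The pool names, the `beta` and `plan` cookies, and the `x-canary` header are hypothetical; real CDNs express rules like these in their own configuration languages or edge code.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)  # lower-cased names assumed
    cookies: Dict[str, str] = field(default_factory=dict)


def has_prefix(path: str, prefix: str) -> bool:
    """True for /api and /api/..., but not for /apis."""
    return path == prefix or path.startswith(prefix + "/")


def choose_pool(req: Request) -> str:
    """Pick an origin pool from request data. Rules are checked top to bottom."""
    # Experiment routing: beta traffic goes to the canary cluster first.
    if req.cookies.get("beta") == "1" or req.headers.get("x-canary") == "true":
        return "canary-pool"
    # Request-aware routing by path.
    if has_prefix(req.path, "/static"):
        return "static-pool"
    if has_prefix(req.path, "/api"):
        # Paid users get a separate API pool.
        return "api-priority-pool" if req.cookies.get("plan") == "paid" else "api-pool"
    return "web-pool"
```

Rule order matters: because the canary check runs first, a paid user in the beta lands on the canary pool, not the priority API pool. Details like that are why Layer 7 rules need tests.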

What You Pay For That Power

Layer 7 control costs CPU and complexity. The edge has to decrypt and parse. Rules have to be tested.

The overhead usually shows up as:

- More work per request

TLS and parsing take resources.

- More moving parts

Retries, timeouts, safety cutoffs, and pools need care.

- More risk from mistakes

One bad rule can create routing loops or serve one user's cached content to another.

- More tooling needs

You need testing, versioning, safe defaults, and rollbacks.
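One concrete guard against loops between CDNs, or between tiers of the same CDN, is the CDN-Loop request header from RFC 8586: each CDN appends its own identifier before forwarding, so a node can notice when its identifier shows up too often. The sketch assumes a hypothetical identifier and hop limit and uses deliberately simple parsing.

```python
from typing import Optional

MY_CDN_ID = "example-cdn"  # hypothetical identifier this edge appends
MAX_SELF_HOPS = 2          # hypothetical limit before we treat the request as a loop


def count_self_hops(cdn_loop: str, cdn_id: str = MY_CDN_ID) -> int:
    """Count entries for cdn_id in a CDN-Loop header value.

    Simplified parsing: splits on commas, drops ';' parameters, and compares
    names case-insensitively (an assumption). It does not handle quoted
    parameter values that contain commas.
    """
    count = 0
    for entry in cdn_loop.split(","):
        name = entry.split(";", 1)[0].strip()
        if name.lower() == cdn_id.lower():
            count += 1
    return count


def upstream_cdn_loop(incoming: str, cdn_id: str = MY_CDN_ID) -> Optional[str]:
    """Return the CDN-Loop value to send upstream, or None to refuse a likely loop."""
    if count_self_hops(incoming, cdn_id) >= MAX_SELF_HOPS:
        return None  # a real edge would answer with an error instead of forwarding
    return f"{incoming}, {cdn_id}" if incoming.strip() else cdn_id
```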

Making The Middle Mile Less Random

Even if your edge is close to the user, your origin may be far away. The edge to origin path is the middle mile. Public routes can be congested, and BGP can pick a path that looks cheap, not fast.

Large CDNs often solve this with an overlay network. Traffic enters the CDN, rides a private backbone or tuned links, then exits near your origin. The edge picks paths using live measurements from real traffic, not only static maps.

You do not need to memorize vendor names to understand the idea. The key point is that Layer 7 logic can pick better middle mile paths than default internet routing.
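The measurement-driven part can be sketched as an exponentially weighted moving average (EWMA) of round-trip time per path, with the edge preferring the lowest. This is an illustrative technique, not any vendor's algorithm; the path names and the smoothing factor are assumptions.

```python
from typing import Dict, Iterable, Optional


class PathSelector:
    """Track a smoothed RTT per middle mile path and prefer the lowest."""

    def __init__(self, paths: Iterable[str], alpha: float = 0.3) -> None:
        self.alpha = alpha  # hypothetical weight given to the newest sample
        self.ewma: Dict[str, Optional[float]] = {p: None for p in paths}

    def observe(self, path: str, rtt_ms: float) -> None:
        """Fold one measured round-trip time into the path's moving average."""
        prev = self.ewma[path]
        self.ewma[path] = rtt_ms if prev is None else self.alpha * rtt_ms + (1 - self.alpha) * prev

    def best(self) -> Optional[str]:
        """Return the path with the lowest smoothed RTT, or None before any samples."""
        measured = {p: v for p, v in self.ewma.items() if v is not None}
        if not measured:
            return None
        return min(measured, key=lambda p: measured[p])


selector = PathSelector(["direct", "via-backbone"])  # "direct" = default internet route
selector.observe("direct", 120.0)
selector.observe("via-backbone", 80.0)
```

A single bad sample moves the average only part of the way. If `via-backbone` next reports 400 ms, its average rises from 80 ms to 176 ms, which is enough to switch back to `direct` here, while smaller one-off spikes get damped instead of triggering a switch.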

Anycast also plays a role. Anycast is a network-layer technique: edge locations in many cities announce the same IP range over BGP, and the internet routes each user to a nearby edge location.

Anycast helps in two places:

- Front door failover

Traffic can move away from a dead site without waiting for DNS cache expiry.

- Attack dilution

A big DDoS can spread across many edge locations, instead of crushing one link.

Anycast can still misroute some users because peering policy matters. That is why many teams use DNS for a broad choice, then rely on Anycast inside that boundary.
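The peering effect can be shown with a toy model of how one user network picks among routes to the same Anycast prefix: higher local preference first (a common way to encode peering policy), then shorter AS path, then an arbitrary name tie-breaker. Standard BGP best-path selection has more steps than this and does not look at latency at all; the sites and numbers are made up.

```python
from typing import Dict, Iterable, Optional

# Hypothetical routes one user network sees for the same Anycast prefix.
# local_pref models peering policy; rtt_ms is what the user would actually feel.
ROUTES: Dict[str, Dict[str, int]] = {
    "fra": {"local_pref": 100, "as_path_len": 2, "rtt_ms": 20},
    "ams": {"local_pref": 200, "as_path_len": 3, "rtt_ms": 60},  # cheap peering link
    "lon": {"local_pref": 100, "as_path_len": 2, "rtt_ms": 35},
}


def anycast_pick(routes: Dict[str, Dict[str, int]], withdrawn: Iterable[str] = ()) -> Optional[str]:
    """Toy route choice: highest local_pref, then shortest AS path, then site name.

    Note that rtt_ms is never consulted, just as BGP does not measure latency.
    """
    gone = set(withdrawn)
    candidates = [site for site in routes if site not in gone]
    if not candidates:
        return None
    return min(candidates, key=lambda s: (-routes[s]["local_pref"], routes[s]["as_path_len"], s))
```

Here the user lands on `ams` at 60 ms although `fra` answers in 20 ms. If `ams` withdraws its announcement, the same function moves the user to `fra` with no DNS change, which is the front door failover described above.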

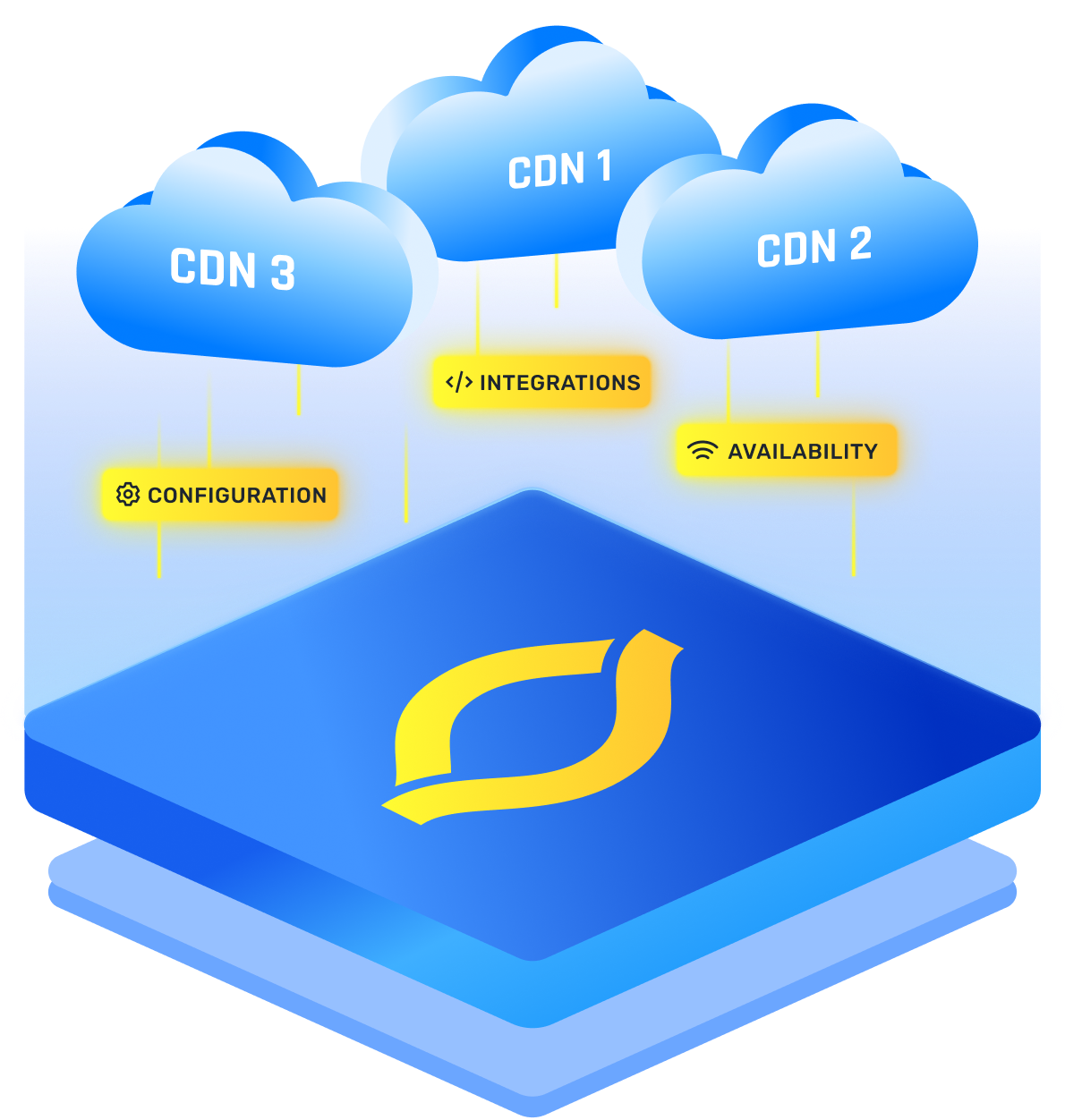

Why Most Teams Combine Both Layers

Most teams do not pick DNS or Layer 7. They combine both and assign each layer a job.

This is what CDN and DNS integration looks like when the system is designed on purpose, not by accident.

A clean hybrid model looks like this:

- DNS picks the outer boundary

DNS selects the CDN vendor, or selects a broad region.

- Anycast gets the user to an edge

The network brings packets to a close edge location.

- The edge makes request level choices

The edge decides cache, compute, routing, and retries.

- Overlay routing tunes edge to origin

The CDN picks a good middle mile path based on live data.

Now you can explain the stack in a simple sentence: DNS chooses where to enter, and the edge chooses how to serve.

When You Use More Than One Provider

If you want higher resilience, you may use more than one CDN. That approach is common for high uptime goals, strict performance targets, strong cost control, and compliance needs.

That is multi-CDN traffic steering. You spread traffic across vendors, and you keep the option to shift fast.

DNS is usually the main switch, because each vendor owns different IP space. DNS can choose which vendor answers the first connection.

But DNS should not be your only lever. You also have APIs and config tools inside each vendor. This is where DNS vs API traffic management becomes real work, not just theory.

A simple way to split responsibility looks like this:

- Use DNS for big moves

Vendor selection, regional shifts, large percentage changes, and planned cutovers.

- Use APIs for fine moves

Origin pool weights, cache rules, per URL path behavior, and fast fixes during incidents.

- Use automation for repeatable moves

Templates, approvals, staged rollouts, and quick rollback paths.

- Use monitoring gates for every move

Error budgets, latency targets, rate of change, and alert checks.
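A monitoring gate around a staged rollout might look like the sketch below. The thresholds are hypothetical, and `read_metrics` and `apply_weight` are placeholders for your own monitoring query and your DNS or vendor API call.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Metrics:
    error_rate: float      # fraction of failed requests, for example 0.002
    p95_latency_ms: float  # 95th percentile end user latency


# Hypothetical gate values; derive yours from your error budget and latency target.
MAX_ERROR_RATE = 0.01
MAX_P95_MS = 400.0


def passes_gate(m: Metrics) -> bool:
    return m.error_rate <= MAX_ERROR_RATE and m.p95_latency_ms <= MAX_P95_MS


def staged_shift(
    steps: Sequence[int],
    read_metrics: Callable[[], Metrics],
    apply_weight: Callable[[int], None],
) -> int:
    """Raise the target's weight step by step; roll back and stop at the first failed gate.

    Returns the last weight (in percent) that passed the gate.
    """
    last_good = 0
    for pct in steps:
        apply_weight(pct)
        if not passes_gate(read_metrics()):
            apply_weight(last_good)  # undo the step that made things worse
            return last_good
        last_good = pct
    return last_good
```

A real rollout would also wait between steps so the metrics have time to reflect the new weight, and for DNS-driven weights that wait should cover at least the TTL.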


Playbook You Can Run At 2 A.M.

You want a plan that works under stress. Here is a four-part playbook that is easy to follow.

- Watch four signals

Edge error rate, origin error rate, end user latency, and cache hit ratio.

- Fail inside the edge first

If one origin path fails, retry another pool inside the CDN before you change DNS.

- Shift with weights, not one big switch

Move traffic in steps so you can stop early if metrics get worse.

- Use DNS for the broad escape hatch

If a whole vendor or region is sick, DNS can move new sessions elsewhere, even if caches delay some users.
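Failing inside the edge first can be sketched as an ordered walk over origin pools. `fetch` and `OriginError` are hypothetical stand-ins for whatever the edge uses to call an origin and to report a failed or timed-out attempt.

```python
from typing import Callable, List, Sequence, Tuple


class OriginError(Exception):
    """Hypothetical error a fetch function raises when a pool fails or times out."""


def fetch_with_failover(
    pools: Sequence[str], fetch: Callable[[str], str]
) -> Tuple[str, str]:
    """Try origin pools in order and return (pool, response) from the first success."""
    errors: List[str] = []
    for pool in pools:
        try:
            return pool, fetch(pool)
        except OriginError as exc:
            errors.append(f"{pool}: {exc}")  # remember why this pool was skipped
    raise OriginError("all pools failed: " + "; ".join(errors))
```

Retry only requests that are safe to repeat, such as GET, and cap the number of attempts so a sick origin does not also receive a retry storm. DNS stays untouched unless every pool behind the edge is failing.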

Myths That Cause Painful Outages

These beliefs show up in incident calls. If you catch them early, you save yourself time.

- DNS changes are instant

DNS caches mean some users will lag behind your change.

- Anycast always means lowest latency

Peering policy can send users to a location that looks close on paper, but feels slow in real life.

- Layer 7 routing is always slower

Layer 7 adds work, yet smart retries and better middle mile paths can reduce total page time.

- Multi CDN means you lose control

With clear weights, good monitoring, and safe automation, you can gain more control, not less.

Picking A Steering Plan You Can Defend

Your CDN routing strategies should match your risks and your team size.

You do not choose a steering method based on fashion. You choose based on the failure modes you fear and the control you need every week.

Lean More On DNS When

- You want clean portability

A vendor swap should be a DNS change, not a client update.

- You need clear percentage steering

Weights help with cost planning and capacity limits.

- Your workload is mostly cache friendly

Static pages, images, file downloads, and simple redirects benefit more from caching than from deep logic.

- You want simple operations

Fewer moving parts can be safer for small teams.

Lean More On Layer 7 When

- You serve many request types

Static pages, API calls, personalized pages, and media streams behave differently.

- You need fast and precise recovery

Retries and reroutes inside seconds matter for user sessions.

- You run frequent experiments

Routing based on headers, cookies, query strings, and user segments makes rollouts safer.

- You want better origin paths

Overlay routing can cut latency when origin sits far away.

Use A Hybrid Model When

- You want multi vendor resilience

DNS selects the vendor, then the edge handles the fine routing.

- You need both explicit policy and real context

DNS gives deliberate steering, and Layer 7 gives live decisions.

- You want layered safety

A mistake in one layer has less chance to break everything.

- You face mixed risks

DDoS, regional outages, origin failures, and sudden traffic spikes need different tools.

Conclusion

DNS feels simple because the choice happens early. Layer 7 feels powerful because the choice happens with context. In practice, you get the best outcome when you stop treating the two approaches as rivals.

DNS-based traffic routing remains valuable for broad steering and vendor choice. Application-level traffic control wins when you need fast decisions and request-level logic.

FAQs

1. What Is DNS-Based Traffic Routing In A CDN?

DNS-based traffic routing is when your authoritative DNS returns different IP addresses based on rules like region, load, or health. You are steering traffic before the user opens a connection, which is why DNS caching and TTL behavior can affect how quickly changes take effect.

2. What Is Application-Level Traffic Control For CDNs?

Application-level traffic control happens at the CDN edge after the request arrives. The edge can read the URL path, headers, cookies, and live performance signals, then route the request to the best cache, origin pool, or backend based on real context.

3. DNS Vs API Traffic Management, Which One Should You Use?

Use DNS when you need big, deliberate moves like selecting a CDN provider or shifting traffic between regions. Use APIs when you need fast, fine-grained changes like adjusting origin pool weights, applying rule updates, or responding to incidents without waiting on DNS caching.

4. How Does Multi CDN Traffic Steering Usually Work?

Multi CDN traffic steering usually starts with DNS choosing which CDN the user connects to, since each CDN owns different IP space. After the user lands on the chosen CDN, application-level controls at the edge handle per-request routing, retries, and origin selection.

5. Why Can DNS Failover Feel Slow During Outages?

DNS failover can feel slow because resolvers and devices cache DNS answers. Even with low TTL values, some resolvers enforce minimum caching times, which creates a long tail where part of your traffic keeps hitting the old endpoint longer than you expected.

6. What Are The Best CDN Routing Strategies For High Availability?

A strong approach is a hybrid model: use DNS for coarse steering and multi-CDN decisions, use Anycast to get users to a nearby edge with fast network-level failover, and use application-level traffic control at the edge for request-aware routing and rapid origin failover.