What Challenges Emerge When Managing Traffic across Multiple CDNs?


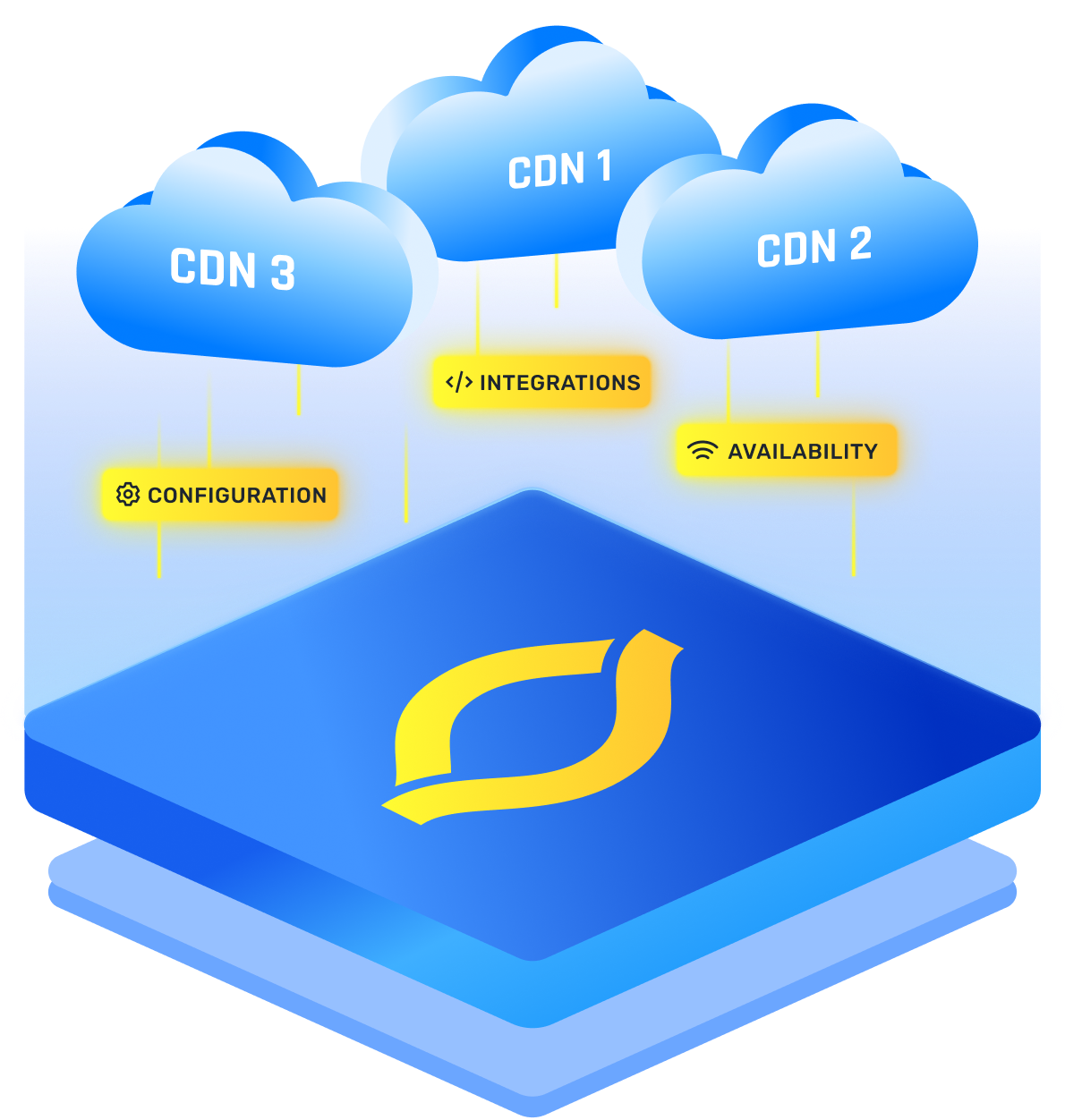

Running CDN traffic across more than one provider can improve resilience, but it also creates new failure modes. The hard part is not adding a second CDN but managing the traffic: steering requests consistently, keeping caches and security rules aligned, and proving with data that your multi-CDN strategy is actually helping.

If you do it casually, the biggest multi-CDN challenges are inconsistency, slower debugging, and surprise costs.

Traffic Steering And Routing Pitfalls

Most problems start with steering. DNS routing is “easy,” but the decision is made from where the recursive resolver sits, which is not always where the user is. A mobile user, a roaming laptop, or a big enterprise behind centralized DNS can get mapped to the wrong region. Low TTLs reduce the damage, but you cannot force every resolver to honor them, so rollback can lag.

Performance-based routing adds another risk: flapping. If you shift traffic to CDN A because it looks 20 ms faster, A can get loaded and slow down, and your controller shifts back to B. That loop is common when multi-CDN switching has no hysteresis, minimum dwell time, or region-level isolation.
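As a rough illustration of hysteresis plus a minimum dwell time, here is a minimal sketch. The names (`SteeringState`, `choose_cdn`) and the default thresholds (a 30 ms margin, a 300 second dwell) are assumptions made up for this example, not values from any particular steering product.

```python
from dataclasses import dataclass


@dataclass
class SteeringState:
    active: str            # CDN currently receiving traffic for this region
    last_switch_s: float   # timestamp of the last switch, in seconds


def choose_cdn(state, latency_ms, now_s, margin_ms=30.0, min_dwell_s=300.0):
    """Return the CDN to use; switch only on a clear advantage, and not too often."""
    best = min(latency_ms, key=latency_ms.get)
    if best == state.active:
        return state.active
    # Minimum dwell time: stay put if the last switch was too recent.
    if now_s - state.last_switch_s < min_dwell_s:
        return state.active
    # Hysteresis: the challenger must beat the active CDN by a margin.
    if latency_ms[state.active] - latency_ms[best] < margin_ms:
        return state.active
    state.active = best
    state.last_switch_s = now_s
    return best
```

With these defaults, a 20 ms advantage is ignored because it is inside the margin. Keeping one `SteeringState` per region is one simple way to get region-level isolation, so a shift in one region does not drag the others along.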

Health checks also lie. A CDN can return 200 OK for a test file while real users fail on TLS, specific ISPs, large objects, or certain paths.
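One way to make health checks lie less is to probe several segments (TLS handshake, a large object, a few real paths, a few ISPs) and judge each one separately. The sketch below assumes you already collect probe results as `(segment, ok)` pairs; the segment names and the 95 percent threshold are illustrative assumptions.

```python
from collections import defaultdict


def unhealthy_segments(probes, min_success=0.95):
    """probes: iterable of (segment, ok) pairs, e.g. ("large-object", False).

    Returns the sorted list of segments whose success rate is below min_success.
    """
    totals = defaultdict(int)
    passes = defaultdict(int)
    for segment, ok in probes:
        totals[segment] += 1
        if ok:
            passes[segment] += 1
    return sorted(s for s in totals if passes[s] / totals[s] < min_success)
```

A CDN that serves the small test file perfectly but fails half of the large-object probes shows up as `["large-object"]` instead of a single green light.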

Observability And Metric Normalization Challenges

In multi-CDN, measurement stops being apples to apples. Each provider defines and samples metrics differently, and retries can hide errors. One dashboard can show “low error rate” while users see timeouts, because the CDN retried at the edge and only the origin saw the pain.

You also inherit a correlation problem. To debug quickly, edge logs, WAF logs, origin logs, and app logs need a shared request ID, consistent client IP handling, and time sync. Without that, every incident becomes “it is slow sometimes,” and you spend hours proving where the slowdown lives.
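A shared request ID is the cheapest piece of that to sketch. The helper below reuses an incoming ID if one exists and mints one otherwise. The header name `X-Request-ID` is a common convention but an assumption here; use whatever name both CDNs and your origin can pass through and log.

```python
import uuid


def ensure_request_id(headers, header_name="X-Request-ID"):
    """Reuse an incoming request ID or mint a new one; returns (id, headers)."""
    # HTTP header names are case-insensitive, so compare in lowercase.
    for name, value in headers.items():
        if name.lower() == header_name.lower() and value.strip():
            return value.strip(), dict(headers)
    request_id = uuid.uuid4().hex
    # Drop any empty variant of the header before setting the new value.
    updated = {k: v for k, v in headers.items() if k.lower() != header_name.lower()}
    updated[header_name] = request_id
    return request_id, updated
```

The point is that the same ID then appears in edge, WAF, origin, and app logs, regardless of which CDN handled the request.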

Cache Consistency And Purge Complexity

Cache is where you feel inconsistency. Users can see different versions of the same content depending on which CDN answered them.

Purge is the classic trap. Purge APIs, limits, and propagation timing vary per provider. If you purge CDN A and forget CDN B, or if CDN B takes longer in one region, you get a split-brain experience: support tickets that say “the site updated for my coworker but not for me.”
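A small fan-out wrapper makes "purged everywhere" an explicit result instead of a hope. In this sketch the per-provider purge functions are hypothetical callables that you would write around each vendor's real purge API; nothing here is an actual vendor SDK call.

```python
def purge_everywhere(paths, purgers):
    """Call every provider's purge function and report the outcome per provider.

    purgers: dict of provider name -> callable(paths) that raises on failure.
    Returns a dict of provider name -> "ok" or "failed: <reason>".
    """
    results = {}
    for name, purge in purgers.items():
        try:
            purge(paths)
            results[name] = "ok"
        except Exception as exc:  # keep going so one failure does not skip the rest
            results[name] = f"failed: {exc}"
    return results


def fully_purged(results):
    """True only if every provider accepted the purge."""
    return all(status == "ok" for status in results.values())
```

Note that "ok" here only means the provider accepted the request. Propagation timing still varies by provider and region, so a follow-up check that fetches the purged URL through each CDN is a sensible extra step.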

Cache keys drift too. Query strings, cookies, and header variations (like Accept-Encoding or device headers) do not behave identically unless you force them to. When you shift traffic, you are not just moving users; you are moving them between two cache universes.
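It helps to write down the cache key you intend both CDNs to use, then configure each one to match it. The sketch below is one possible definition: the ignored tracking parameters and the three encoding variants are assumptions for illustration, and the `q=` weights in Accept-Encoding are ignored for simplicity.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign"}  # assumed list


def cache_key(url, accept_encoding=""):
    """Build a canonical cache key: host, path, sorted query, encoding variant."""
    parts = urlsplit(url)
    params = sorted(
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in IGNORED_PARAMS
    )
    # Collapse Accept-Encoding into a small, fixed set of variants.
    encodings = {e.split(";")[0].strip().lower() for e in accept_encoding.split(",")}
    if "br" in encodings:
        variant = "br"
    elif "gzip" in encodings:
        variant = "gzip"
    else:
        variant = "identity"
    path = parts.path or "/"
    return f"{parts.netloc.lower()}{path}?{urlencode(params)}|{variant}"
```

Two requests that differ only in parameter order, tracking parameters, or the exact wording of Accept-Encoding then map to the same key, which is the behavior you want both providers to reproduce.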

Origin Load And Failover Surprises

A multi-CDN solution can protect users but punish your origin if you do not plan for cache cold starts. If 90 percent of traffic normally goes to CDN A, CDN B’s cache is often cold. During failover, B has to fetch a lot more from origin, fast, and your origin can buckle.

CDNs also differ in how they talk to your origin:

- Retry behavior can be conservative or aggressive.

- Connection reuse and timeouts vary, changing origin socket pressure.

- Origin shielding may not be equivalent, changing backhaul traffic.

I size and rate-limit the origin assuming failover day, not average day, because that is when reality shows up.
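A back-of-the-envelope calculation shows why. Origin load is roughly the sum of each CDN's cache misses. All numbers below (10,000 requests per second, the hit ratios, the traffic split) are made-up illustrations, not measurements.

```python
def origin_rps(total_rps, shares, hit_ratios):
    """Requests per second reaching origin: the sum of each CDN's cache misses."""
    return sum(total_rps * shares[c] * (1.0 - hit_ratios[c]) for c in shares)


# Normal day: A carries 90 percent and is warm; B carries 10 percent and is cooler.
normal = origin_rps(10_000, {"A": 0.9, "B": 0.1}, {"A": 0.95, "B": 0.80})

# Failover day: B takes everything while its cache is still cold.
failover = origin_rps(10_000, {"A": 0.0, "B": 1.0}, {"A": 0.95, "B": 0.50})

print(round(normal), round(failover))  # 650 5000
```

Under these assumptions the origin goes from about 650 to about 5,000 requests per second until B's cache warms up, which is the number to size and rate-limit against.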

Configuration Drift And Feature Parity Gaps

Two CDNs means two rule engines and two sets of defaults. Even if you start identical, configs drift: a hotfix lands on CDN A during an incident, and CDN B never gets it. Months later you have “mystery behavior” that only appears for a portion of users.
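One way to catch drift early is to keep a vendor-neutral description of the settings you care about and diff it regularly. The sketch below assumes you can export each CDN's config into a flat dictionary; the setting names are hypothetical.

```python
def config_drift(config_a, config_b):
    """Return {setting: (value_a, value_b)} for settings that differ or are missing."""
    missing = object()  # sentinel so a real None value is not mistaken for "absent"
    drift = {}
    for key in sorted(set(config_a) | set(config_b)):
        a = config_a.get(key, missing)
        b = config_b.get(key, missing)
        if a != b:
            drift[key] = (None if a is missing else a, None if b is missing else b)
    return drift


cdn_a = {"default_ttl_s": 300, "force_https": True, "block_path": "/admin"}
cdn_b = {"default_ttl_s": 300, "force_https": False}
print(config_drift(cdn_a, cdn_b))
```

Here the hotfix that added `block_path` on CDN A, and the mismatched `force_https`, both show up in the diff instead of surfacing months later as mystery behavior.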

Feature parity is another hidden cost. If you want consistent delivery, you often end up using only the lowest common denominator of features across both CDNs. That can block you from using better HTTP/3 coverage, stronger image optimization, or richer edge compute on one side, unless you accept two different stacks and twice the testing.

Security, Compliance, And Policy Consistency

Security gets harder because “almost the same rules” is not the same protection. WAF rule sets, bot scoring, and rate limiting knobs differ by vendor. If you tune false positives on one CDN but not the other, you will block real users on one path and let abuse through on the other.

TLS operations multiply too: certificate deployment, cipher policies, HSTS, and security headers all need to match. If you have compliance requirements, you also need to track where each CDN stores logs, for how long, and whether headers with personal data are handled consistently.

Incident Response And Cost Modeling Challenges

Operationally, incidents slow down because you now coordinate two vendors, two status pages, and two “it is not us” narratives. Your runbooks must cover partial failures, not just “CDN down,” and they must define when to shift traffic globally versus per region or ISP.

Cost is also trickier than “pick the cheaper one.” Billing models differ (per GB, per request, per region, per feature), and steering changes cache hit ratio and origin fetches. You can lower CDN egress and still raise total spend if the route you chose causes more misses, more retries, or more logging and security charges.
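A toy model makes the trade-off concrete. It only covers per-GB and per-request CDN charges plus origin egress on cache misses, and it treats the cache hit ratio as a byte hit ratio, which is a simplification. Every price and volume below is an invented example, not a real vendor rate.

```python
def monthly_cost(egress_gb, requests_m, hit_ratio,
                 price_per_gb, price_per_m_requests, origin_price_per_gb):
    """CDN delivery charges plus origin egress caused by cache misses."""
    cdn = egress_gb * price_per_gb + requests_m * price_per_m_requests
    origin = egress_gb * (1.0 - hit_ratio) * origin_price_per_gb
    return cdn + origin


# Same traffic through each CDN: 100,000 GB and 200 million requests per month.
cost_a = monthly_cost(100_000, 200, 0.95, 0.04, 0.50, 0.09)  # pricier per GB, warm cache
cost_b = monthly_cost(100_000, 200, 0.80, 0.03, 0.50, 0.09)  # cheaper per GB, more misses

print(round(cost_a), round(cost_b))  # 4550 4900
```

In this example the CDN that is cheaper per GB ends up costing more overall, because the extra misses show up as origin egress. Real bills add regional pricing, logging, and security features on top.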
