How Do OTT Platforms Test CDN Failover Scenarios?

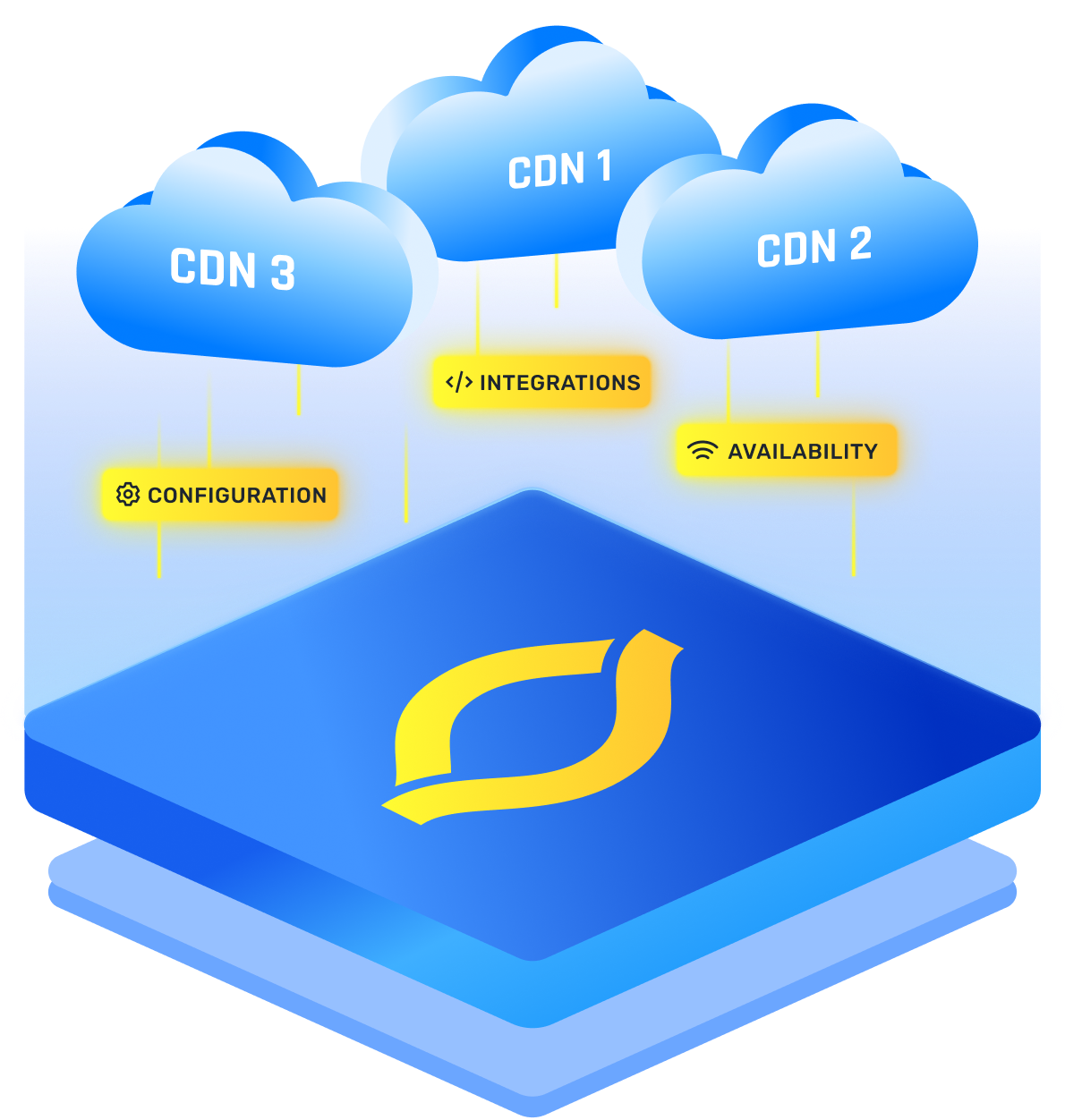

OTT platforms test CDN failover by running a multi-CDN setup, then deliberately making the primary CDN fail in controlled ways, and proving playback keeps going with minimal buffering and only a brief quality dip. If your test only proves traffic moved, it is incomplete.

The stream has to survive the switch in routing, in segment delivery, and inside the player. I treat failover as a feature: validate it with data, not hope.

Where Failover Actually Happens

Most CDN failover testing fails because it treats failover as a single switch. In production, it is a chain of decisions, and each link breaks differently.

In real incidents, the first sign is often slow segments, not a clean outage. Your player’s retry and timeout choices decide whether you glide to a backup CDN or stall until buffers drain.
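To make the retry and timeout point concrete, here is a minimal sketch of a player-side segment fetch that falls back to the next CDN once a per-CDN retry budget is spent. It is an illustration under assumptions, not any particular player's logic: the hostnames, the `fetch` callable, and the default timeout and retry values are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple

# Hypothetical fetcher: takes (url, timeout_seconds), returns bytes or raises.
Fetcher = Callable[[str, float], bytes]


def fetch_segment(
    path: str,
    cdn_hosts: Sequence[str],
    fetch: Fetcher,
    timeout_s: float = 2.0,       # assumed value; tune against segment duration
    retries_per_cdn: int = 1,     # assumed value; extra attempts before moving on
) -> Tuple[str, bytes]:
    """Try each CDN in order and return (host, data) from the first success."""
    last_error: Optional[Exception] = None
    for host in cdn_hosts:
        for _ in range(1 + retries_per_cdn):
            try:
                return host, fetch(f"https://{host}{path}", timeout_s)
            except (TimeoutError, ConnectionError) as exc:
                # Slow or unreachable: spend the retry budget, then move on.
                last_error = exc
    raise RuntimeError(f"all CDNs failed for {path}") from last_error
```

The worst-case time spent on a dead primary is roughly `timeout_s * (1 + retries_per_cdn)`. If that exceeds the buffer the player holds, the viewer stalls before the backup is ever tried.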

A practical rule: if you cannot describe which layer triggers first and which layer catches second, your multi-CDN failover design is guesswork.

Two details that matter more than people expect:

- DNS caching is messy. TTL is a hint, not a guarantee, and ISPs and devices re-resolve differently.

- “CDN up” does not mean “playback healthy.” A CDN can return 200s while being too slow to keep buffers full.

That is why OTT CDN testing needs player telemetry, not just CDN graphs.
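The "200 but too slow" case can be sketched as a check the player telemetry could run per segment: compare download time with the segment's playback duration. The function name and the 0.8 threshold are assumptions for illustration; a real player would tune the ratio against its buffer target.

```python
def segment_health(
    status: int,
    download_s: float,
    segment_duration_s: float,
    slow_ratio: float = 0.8,  # assumed threshold, not a standard value
) -> str:
    """Classify one segment fetch as 'error', 'slow', or 'ok'."""
    if not 200 <= status < 300:
        return "error"
    # A segment that takes nearly as long to download as it takes to play
    # cannot refill the buffer, even though the response was a 200.
    if download_s > segment_duration_s * slow_ratio:
        return "slow"
    return "ok"
```

For a 6-second segment, a 200 response that takes 5.5 seconds to arrive is classified as `slow`, which is exactly the case a CDN-side status graph would miss.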

The Failover Scenarios OTT Teams Actually Run

You do not want one dramatic outage test. You want repeatable scenarios that mirror real failure modes.

If you are protecting premium OTT user experience, focus on three outcomes: startup time stays sane, buffering stays rare, and quality recovers quickly after the switch.

How Failures Get Injected Without Burning Production

You can test in production safely if you scope it and keep a fast rollback. I start with cohorts and get harsher only after the basics look good, using canary traffic so the blast radius stays small.

Common approaches:

- Weighted steering: move 1 percent of traffic, then 5 percent, then 10 percent, to the backup CDN.

- Scoped host overrides: force a geography, ISP, or test group onto an alternate hostname.

- Edge error injection: return 5xx for segment paths for only the cohort.

- Targeted DNS failure: use a test hostname and simulate timeout or NXDOMAIN to study resolver behavior.

If you cannot undo the failure in seconds, the test is too risky.
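Weighted steering with a fast rollback can be sketched with deterministic hashing, so the same viewer stays in the same cohort as the percentage ramps. This is one possible approach, not a description of any steering product; the salt, hostnames, and function names are hypothetical.

```python
import hashlib


def in_cohort(user_id: str, percent: float, salt: str = "cdn-failover-test") -> bool:
    """Deterministically place a user in the first `percent` of 10,000 buckets."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


def pick_cdn(
    user_id: str,
    percent: float,
    primary: str = "primary.example",  # hypothetical hostnames
    backup: str = "backup.example",
) -> str:
    """Send cohort members to the backup CDN and everyone else to the primary."""
    return backup if in_cohort(user_id, percent) else primary
```

Because the bucket for a user never changes, everyone in the 1 percent cohort is still in it at 5 and 10 percent, which keeps before-and-after comparisons clean. Rollback is setting `percent` to 0.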

What You Measure To Prove Failover Worked

If you only measure traffic distribution, you will miss user pain. OTT traffic resilience lives in QoE metrics.

If your dashboards cannot correlate “CDN changed” with “QoE stayed acceptable,” you are debating opinions, not results.
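A minimal sketch of that correlation: split sessions by whether the player switched CDN, then compare rebuffer ratio between the two groups. The `Session` fields are hypothetical, and rebuffer ratio is assumed here to mean stall time divided by stall plus watch time; use whatever definition your analytics already reports.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Session:
    session_id: str
    switched_cdn: bool  # did the player change CDN during this session?
    watch_s: float      # seconds of video actually played
    stall_s: float      # seconds spent rebuffering


def rebuffer_ratio(sessions: Iterable[Session]) -> float:
    """Stall time as a share of total session time (assumed definition)."""
    sessions = list(sessions)
    stall = sum(s.stall_s for s in sessions)
    total = stall + sum(s.watch_s for s in sessions)
    return stall / total if total else 0.0


def compare_by_switch(sessions: List[Session]) -> Dict[str, float]:
    """Rebuffer ratio for sessions that switched CDN versus those that stayed."""
    return {
        "switched": rebuffer_ratio(s for s in sessions if s.switched_cdn),
        "stayed": rebuffer_ratio(s for s in sessions if not s.switched_cdn),
    }
```

If the `switched` ratio sits far above `stayed`, traffic moved but viewers paid for it.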

You Only Need To Keep These In Mind

The loop below covers CDN failover testing, new CDN onboarding, and retry-logic tuning.

- Choose a small, identifiable cohort (1 percent is usually enough to see patterns).

- Baseline QoE on the primary path for that cohort.

- Confirm the backup path is equivalent: signed URLs or tokens, headers, TLS, cache keys, DRM, and logging.

- Inject one failure mode only (5xx or latency, not both).

- Watch player outcomes first, then infra. If rebuffering spikes, the failover may have “worked” while the experience did not.

- Ramp gradually and segment results by region and ISP, because one bad peering path can hide inside a global average.

- Roll back and confirm recovery to steady state.
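The step about segmenting by region and ISP is easy to illustrate with a small grouping function. The row layout and the sample numbers in the note after the code are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical row layout: (region, isp, watch_seconds, stall_seconds)
Row = Tuple[str, str, float, float]


def rebuffer_by_segment(rows: Iterable[Row]) -> Dict[Tuple[str, str], float]:
    """Rebuffer ratio per (region, isp) instead of one global number."""
    totals = defaultdict(lambda: [0.0, 0.0])  # key -> [watch_s, stall_s]
    for region, isp, watch_s, stall_s in rows:
        totals[(region, isp)][0] += watch_s
        totals[(region, isp)][1] += stall_s
    return {
        key: stall / (watch + stall)
        for key, (watch, stall) in totals.items()
        if watch + stall > 0
    }
```

With illustrative rows `("eu", "isp-a", 990, 10)` and `("eu", "isp-b", 80, 20)`, the global ratio is 30 / 1100, about 2.7 percent, which looks tolerable. The per-segment view shows isp-b at 20 percent, which is the bad peering path the average was hiding.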

Two gotchas I always check during OTT platform testing and optimization:

- Cache key differences make one CDN look slow because it never gets hits.

- Aggressive timeouts cause flapping, where the player bounces between CDNs and feels worse than staying put.
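One common way to damp flapping is to require several consecutive failures before switching and to enforce a cooldown after each switch. The sketch below assumes that approach; the class name, thresholds, and hostnames are hypothetical, and the clock is passed in explicitly so the behavior is easy to test.

```python
from typing import Sequence


class CdnSelector:
    """Switch CDN only after repeated failures, and not again during cooldown."""

    def __init__(
        self,
        hosts: Sequence[str],
        failures_to_switch: int = 3,  # assumed threshold
        cooldown_s: float = 30.0,     # assumed minimum time between switches
    ) -> None:
        self.hosts = list(hosts)
        self.failures_to_switch = failures_to_switch
        self.cooldown_s = cooldown_s
        self.index = 0
        self.consecutive_failures = 0
        self.last_switch_at = float("-inf")

    @property
    def current(self) -> str:
        return self.hosts[self.index]

    def report(self, ok: bool, now: float) -> str:
        """Record one segment result and return the host to use next."""
        if ok:
            self.consecutive_failures = 0
            return self.current
        self.consecutive_failures += 1
        cooled_down = now - self.last_switch_at >= self.cooldown_s
        if self.consecutive_failures >= self.failures_to_switch and cooled_down:
            self.index = (self.index + 1) % len(self.hosts)
            self.consecutive_failures = 0
            self.last_switch_at = now
        return self.current
```

The trade-off is deliberate: the player stays on a struggling CDN for a short while rather than bouncing, so both values should be validated against rebuffer data from the same cohort tests described above.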