How Can You Prevent Web Scraping Without Blocking Legitimate Users?


To prevent web scraping without irritating real visitors, you need to make automation expensive and normal browsing easy. That means watching behavior instead of relying on one blunt rule, slowing down suspicious sessions, challenging only risky traffic, and protecting the pages that expose the most data.

Trying to stop website scraping with one giant CAPTCHA, a single IP blocklist, or a hidden trick almost always hurts the wrong people. Good anti-scraping protection is quiet for humans and frustrating for bots.

Use Layered Defenses Instead Of One Big Block

You usually do not block web scraping with a single rule. You stop web scraping by stacking smaller controls that work together.

That matters because real traffic is messy. One person may come from a shared office IP. Another may browse quickly from a mobile network. A search engine crawler may be useful. A monitoring bot may be legitimate. If you treat all automation the same, you end up blocking people who are doing normal things.

I like to think of this as traffic triage. Not every request needs the same response.

This is the core of web scraping prevention. You do not try to make your whole site hostile. You make abusive behavior unprofitable.

One quick point here. Robots.txt is fine for telling polite crawlers where to go, but it does not protect your data. Anyone serious about scraping can ignore it. So if your goal is to prevent web scraping, think enforcement, not hints.

Rate Limit Behavior, Not Just IP Addresses

This is where a lot of sites get it wrong.

If you only rate limit by IP, you will catch schools, offices, co-working spaces, and mobile carriers where many real people share one address. That is how you end up hurting good users while a scraper just rotates through proxy IPs and keeps going.

A better approach is to rate limit based on behavior and context:

- Per path

- Per session

- Per account

- Per API token

- Per cookie

- Per browser or device pattern when your stack supports it

So instead of saying, “This IP made too many requests,” you are saying, “This session hit 200 search pages in two minutes,” or “This account requested product IDs in a perfectly sequential pattern.”

That is far more useful.

For example, your homepage may deserve very loose limits. Your search results, public directory, pricing pages, or product listing pages probably need much tighter ones. Those are the routes scrapers love because one session can pull a lot of records quickly.
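Here is a minimal sketch of that idea: a sliding-window limiter keyed by a client identifier (session, account, or token) plus the route, with looser limits on the homepage and tighter ones on data-rich routes. The route names, the numbers in `ROUTE_LIMITS`, and the `SlidingWindowLimiter` class are all assumptions for illustration, and the in-memory store would need to be replaced with something shared in a real deployment.

```python
import time
from collections import defaultdict, deque

# Hypothetical per-route limits: (max requests, window in seconds).
ROUTE_LIMITS = {
    "/": (300, 60),        # homepage: very loose
    "/search": (30, 60),   # data-rich routes: much tighter
    "/products": (60, 60),
}
DEFAULT_LIMIT = (120, 60)


class SlidingWindowLimiter:
    """Counts requests per (client key, route) over a rolling time window."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._hits = defaultdict(deque)

    def allow(self, client_key: str, route: str) -> bool:
        max_requests, window = ROUTE_LIMITS.get(route, DEFAULT_LIMIT)
        now = self._clock()
        hits = self._hits[(client_key, route)]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= window:
            hits.popleft()
        if len(hits) >= max_requests:
            return False
        hits.append(now)
        return True
```

The `client_key` can be anything stable you already have, such as `"session:<id>"` or `"account:<id>"`, so two people behind the same office IP are counted separately.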

A few signals are worth watching closely:

- Very fast pagination through results

- Repeated requests to the same endpoint with tiny parameter changes

- Sequential ID lookups

- Lots of data requests with little or no asset loading

- The same browser pattern reappearing across rotating IPs
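Two of these signals are easy to sketch in code: sequential ID lookups, and lots of data requests with almost no asset loading. The `SessionStats` shape and every threshold below are assumptions you would tune against your own traffic, not recommended values.

```python
from dataclasses import dataclass, field


@dataclass
class SessionStats:
    """Hypothetical per-session counters collected by your request logging."""
    requested_ids: list[int] = field(default_factory=list)
    data_requests: int = 0
    asset_requests: int = 0


def looks_sequential(ids, min_run=20):
    """True if ids contain a run of at least min_run values, each one above the last."""
    run = 1
    for prev, cur in zip(ids, ids[1:]):
        run = run + 1 if cur == prev + 1 else 1
        if run >= min_run:
            return True
    return False


def low_asset_ratio(stats, min_data_requests=50, max_ratio=0.1):
    """True if a busy session loads almost no images, CSS, or scripts."""
    if stats.data_requests < min_data_requests:
        return False  # too little traffic to judge
    return stats.asset_requests / stats.data_requests <= max_ratio
```

Neither check proves anything alone. They are inputs to a decision, which is why the thresholds only fire after a meaningful amount of traffic.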

When a client crosses a limit, return a clean 429 response for APIs and slow or challenge browser traffic where it makes sense. That keeps the system predictable for real developers and frustrating for scrapers.
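For the API side, a "clean" 429 usually means a status code, a `Retry-After` header, and a small machine-readable body. This is a framework-neutral sketch. The `too_many_requests` helper and the JSON field names are my own assumptions, so adapt the return value to whatever your web framework expects.

```python
import json
import math


def too_many_requests(retry_after_seconds: float):
    """Build a (status, headers, body) tuple for a rate-limited API call."""
    # Retry-After takes whole seconds; round up so clients never retry early.
    retry_after = max(1, math.ceil(retry_after_seconds))
    headers = {
        "Content-Type": "application/json",
        "Retry-After": str(retry_after),
    }
    body = json.dumps({"error": "rate_limited", "retry_after_seconds": retry_after})
    return 429, headers, body
```

A well-behaved client can read `Retry-After` and back off without guessing, which is what keeps the limit predictable for legitimate developers.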

Challenge Only The Sessions That Look Wrong

A site-wide CAPTCHA sounds like protection, but it is usually just a tax on your own audience.

Most real people should never see a challenge. The better move is to challenge only when the session already looks wrong.

A human usually reads, pauses, scrolls, changes direction, opens a few pages, and behaves a little inconsistently. A scraper tends to be efficient, repetitive, and unnaturally fast. That difference is not perfect, but it is useful enough to guide your next step.

So instead of throwing a challenge at everyone, use it after a risk signal:

- Deep pagination at machine speed

- Hundreds of requests to a search or list endpoint

- Repeated filter changes with almost no reading time

- Sign-in attempts followed by instant bulk extraction

- Suspicious browsing patterns on data-rich pages

This is how you stop scrapers without punishing ordinary visitors. The challenge becomes a second layer, not the front door.
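One way to wire that up is a simple risk score: each signal adds weight, and the response escalates only when the total crosses a threshold. The signal names, weights, and thresholds here are made up for illustration. Treat it as a sketch of the shape, not a tuned policy.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    SLOW = "slow"
    CHALLENGE = "challenge"


# Hypothetical weights for the risk signals listed above.
SIGNAL_WEIGHTS = {
    "deep_pagination_fast": 40,
    "bulk_list_requests": 30,
    "rapid_filter_changes": 20,
    "login_then_bulk_extract": 50,
}


def choose_action(signals: set[str], slow_at: int = 30, challenge_at: int = 60) -> Action:
    """Escalate from allow to slow to challenge as risk signals accumulate."""
    score = sum(SIGNAL_WEIGHTS.get(name, 0) for name in signals)
    if score >= challenge_at:
        return Action.CHALLENGE
    if score >= slow_at:
        return Action.SLOW
    return Action.ALLOW
```

A session with no signals never sees a challenge, and a single weak signal only earns a slowdown at most.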

One practical detail matters here. Browser-style challenges belong on browser pages. APIs should usually be protected with quotas, tokens, authentication, signed requests, or stricter per-client limits, not random visual tests. Real apps hate CAPTCHA walls.
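As one example of the API-side options, here is a rough sketch of signed requests using an HMAC over the method, path, timestamp, and body. The message layout, the five-minute skew window, and the function names are assumptions of mine rather than any standard scheme. The `hmac` and `hashlib` calls are from the Python standard library.

```python
import hashlib
import hmac


def sign_request(secret: bytes, method: str, path: str, timestamp: int, body: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the parts of the request."""
    message = b"\n".join(
        [method.upper().encode(), path.encode(), str(timestamp).encode(), body]
    )
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_request(secret, method, path, timestamp, body, signature, now, max_skew=300):
    """Reject stale timestamps, then compare signatures in constant time."""
    if abs(now - timestamp) > max_skew:
        return False  # too old or too far in the future: possible replay
    expected = sign_request(secret, method, path, timestamp, body)
    return hmac.compare_digest(expected, signature)
```

The point is that each client holds its own secret, so quotas and revocation attach to a client you can identify instead of to an IP address.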

Protect The Pages Scrapers Actually Want

You do not need the same level of protection everywhere.

Most teams waste time protecting low-value pages evenly, while the routes that leak the most data stay wide open. If you want to stop website scraping without collateral damage, start where one automated session can collect the most value.

Usually that means search results, public directories, pricing pages, product listings, and any export-like action.

This is also where exposure control matters. A scraper can only take what you actually serve.

So look at what each request returns. Do anonymous users really need 100 results per page? Do they need every field? Do they need to reach page 200? Do they need a clean, structured endpoint that exposes more than the interface itself needs?

Often the smartest change is not a harsher block. It is a smaller response.

That may look like:

- Fewer results per page for anonymous visitors

- Tighter depth limits on pagination

- Less detail in public list views

- Full detail only after login or on the final page view

- Separate quotas for export-like actions

This is the part people skip when they try to block web scraping with a CAPTCHA alone. Reducing exposure will not make scraping impossible, but it can make it dramatically less efficient.
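A smaller response can be as simple as clamping the listing parameters and trimming fields for anonymous visitors. In this sketch the page sizes, the depth limit, and the `PUBLIC_FIELDS` tuple are placeholder assumptions. Your own numbers should come from what the interface actually needs.

```python
# Hypothetical exposure limits; pick values from real usage data.
ANON_PAGE_SIZE = 20
AUTH_PAGE_SIZE = 100
ANON_MAX_PAGE = 10
PUBLIC_FIELDS = ("id", "name", "price")


def clamp_listing_request(page: int, page_size: int, authenticated: bool):
    """Return a safe (page, page_size), or None if the page is too deep for anonymous use."""
    max_size = AUTH_PAGE_SIZE if authenticated else ANON_PAGE_SIZE
    size = max(1, min(page_size, max_size))
    page = max(1, page)
    if not authenticated and page > ANON_MAX_PAGE:
        return None  # caller can prompt for login instead of serving deeper pages
    return page, size


def trim_record(record: dict, authenticated: bool) -> dict:
    """Serve only the public fields in list views for anonymous visitors."""
    if authenticated:
        return dict(record)
    return {key: record[key] for key in PUBLIC_FIELDS if key in record}
```

With these example numbers, an anonymous session tops out at 200 trimmed records per listing, no matter how fast it requests them.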

Let Good Bots Through On Purpose

Not every bot is your enemy.

Search crawlers, uptime monitors, and some partner integrations are useful. If you lump them together with abusive scrapers, you will spend half your time cleaning up self-inflicted damage.

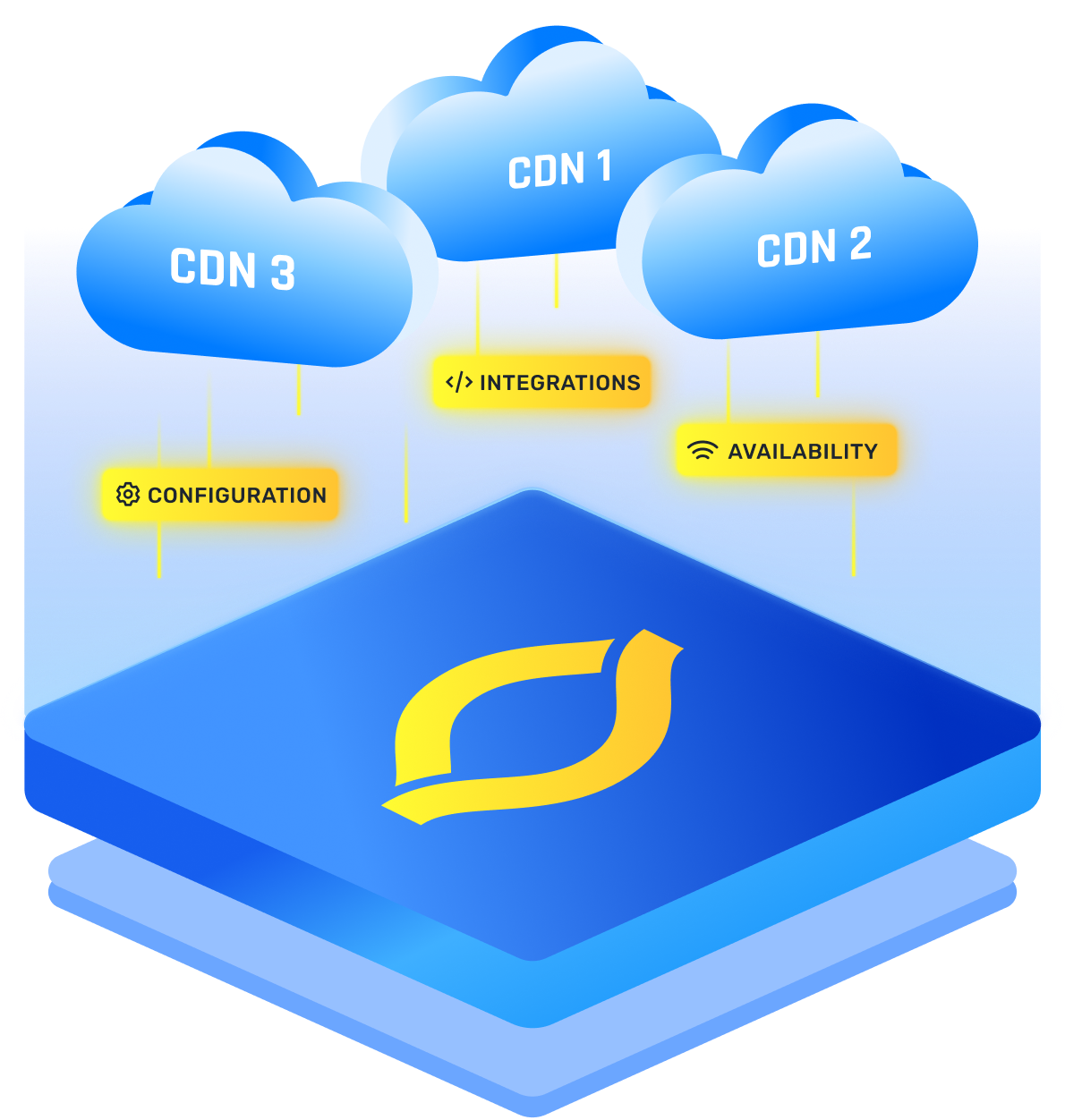

So keep a verified allow path for trusted automation. The key word is verified. Do not trust a user-agent string by itself, because anyone can fake one. You want some form of validation, whether that comes from your CDN, WAF, reverse DNS checks for major crawlers, signed requests, or a trusted integration path.
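The reverse DNS check for major crawlers typically works in two steps: resolve the IP to a hostname, confirm the hostname belongs to the crawler's domain, then resolve that hostname forward and confirm it maps back to the same IP. Below is a sketch using the standard `socket` module. The `verify_crawler_ip` function and its injectable lookup parameters are my own, and the suffix list you pass in should come from each crawler operator's published documentation.

```python
import socket


def verify_crawler_ip(ip, allowed_suffixes, reverse_lookup=None, forward_lookup=None):
    """Forward-confirmed reverse DNS: IP -> hostname -> IPs must include the original IP."""
    # Default lookups use the system resolver; gethostbyname_ex is IPv4-only.
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        host = reverse_lookup(ip).rstrip(".").lower()
    except OSError:
        return False  # no PTR record or resolver failure
    # Match on a full domain boundary so "googlebot.com.evil.example" fails.
    if not any(host == s or host.endswith("." + s) for s in allowed_suffixes):
        return False
    try:
        return ip in forward_lookup(host)
    except OSError:
        return False
```

DNS lookups are slow, so in practice you would cache the result per IP rather than run this on every request.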

This helps legitimate users too.

Why? Because once good bots are separated from bad automation, your anti-scraping rules can be stricter where they should be. You are no longer afraid that every protective rule will accidentally hurt search visibility or break a partner service.

Treat Login As A Speed Bump, Not A Solution

A login wall can help, but it is not a full answer.

It will slow casual scraping, and it may reduce anonymous bulk collection, but it will not stop a determined scraper using throwaway accounts, stolen accounts, or cheap signups. So once traffic is authenticated, you still need quotas and behavior rules.

That means:

- Per-account limits

- Per-session limits after login

- Extra checks for new or low-trust accounts

- Tighter controls on account actions that expose lots of data

- Alerts when one account browses like a harvesting script

I would never assume that “logged in” means “safe.” It just gives you a better handle for enforcement.
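As a sketch of what that handle can look like, here is a per-account daily quota that gives new or unverified accounts a smaller allowance. The seven-day cutoff, the quota numbers, and the idea of counting "detail views" are assumptions for the example.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical trust tiers and daily allowances.
NEW_ACCOUNT_AGE = timedelta(days=7)
DAILY_DETAIL_VIEWS = {"new": 200, "established": 2000}


def daily_quota(created_at: datetime, now: datetime, email_verified: bool) -> int:
    """New or unverified accounts get the low-trust allowance."""
    is_new = (now - created_at) < NEW_ACCOUNT_AGE or not email_verified
    return DAILY_DETAIL_VIEWS["new" if is_new else "established"]


def within_quota(views_today: int, created_at: datetime, now: datetime,
                 email_verified: bool) -> bool:
    """True while the account still has detail views left for today."""
    return views_today < daily_quota(created_at, now, email_verified)
```

Throwaway signups still get in, but each one buys the scraper far less data than an account with some history behind it.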

Measure False Positives Before You Tighten The Screws

The fastest way to annoy real users is to deploy strict rules without watching what they break.

You want protection, but you also want normal browsing, search, signup, and checkout flows to stay healthy. So before you fully enforce new rules, watch them in monitor mode or soft mode where possible.

The numbers worth caring about are simple:

- How many sessions get rate limited on high-value routes

- How often challenges are shown

- How often real users pass those challenges

- Whether search, signup, or conversion rates drop after a rule change

- Whether support tickets suddenly mention access issues

This is how you keep the system honest. A rule that catches a lot of bots but crushes your own search page usage is not a good rule. A rule that quietly slows abusive sessions while real users barely notice is the one you keep.
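Monitor mode can be sketched as a rule wrapper that always records matches but only blocks once you flip `enforce` on. The `Rule` class and the `pages_per_minute` field in the usage below are illustrative assumptions, not part of any particular WAF or framework.

```python
from collections import Counter


class Rule:
    """A detection rule that can run in monitor mode before it is enforced."""

    def __init__(self, name, predicate, enforce=False):
        self.name = name
        self.predicate = predicate  # callable: session -> bool
        self.enforce = enforce
        self.stats = Counter()

    def check(self, session) -> bool:
        """Return True only if the request should actually be blocked."""
        self.stats["evaluated"] += 1
        if not self.predicate(session):
            return False
        self.stats["matched"] += 1
        if self.enforce:
            self.stats["blocked"] += 1
            return True
        return False  # monitor mode: count the match, let the request through

    def match_rate(self) -> float:
        """Share of evaluated sessions the rule would have affected."""
        evaluated = self.stats["evaluated"]
        return self.stats["matched"] / evaluated if evaluated else 0.0


# Usage: run in monitor mode first, inspect the rate, then enforce.
rule = Rule("fast-pagination", lambda s: s["pages_per_minute"] > 100)
for ppm in (3, 8, 250, 5):
    rule.check({"pages_per_minute": ppm})
```

If the match rate in monitor mode is higher than you would expect from bots alone, that is the moment to look at who is matching before anyone gets blocked.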

What I’d Deploy First

If you asked me to harden a site this week, I would keep it practical:

- Identify the five to ten routes that expose the most data or the most expensive queries.

- Put tighter rate limits on those routes, based on session, account, token, and path, not just IP.

- Add selective challenges only for browser sessions that show clear scraping patterns.

- Verify and allow trusted bots separately so your protections stay strict without hurting SEO or integrations.

- Reduce anonymous bulk access by shrinking page size, limiting depth, and trimming unnecessary fields.

- Put public APIs and export-like endpoints behind explicit quotas and clear enforcement.

- Watch false positives before converting soft controls into hard blocks.

- Expand to the next most valuable routes once the first set stays clean.