What Metrics Should You Use To Evaluate CDN Performance Across Providers?


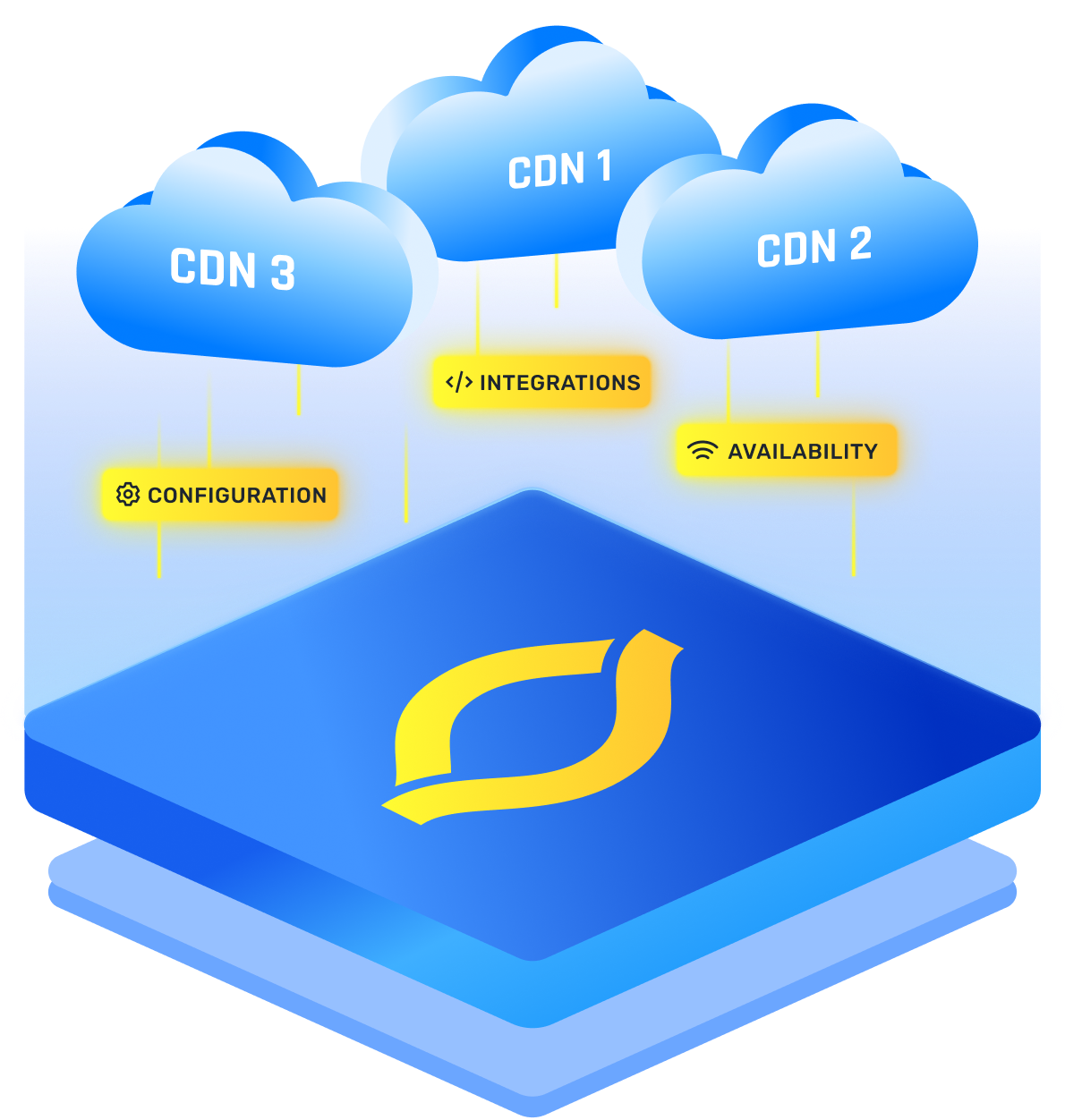

To compare content delivery providers fairly, avoid the “one speed number” trap. You want a tight set of CDN performance metrics that capture user experience and operational reality: CDN latency in percentiles (p50, p95, p99), cache effectiveness (hit ratio plus origin offload), reliability (errors and timeouts), and throughput under load (whether it stays fast when traffic spikes).

Then slice everything by geography and ISP, because “global average” can hide the exact market where your customers are stuck waiting.

One extra step makes the comparison meaningful: set your own targets first. For example, decide what p95 TTFB is “fast enough” for cache hits, what error rate you will tolerate, and how much origin offload you expect. Without those guardrails, it is easy to crown a winner that is fast in a benchmark but irrelevant to your real workload.

Do this and your CDN ranking will reflect what users actually feel.
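As a minimal sketch of what "set your own targets first" could look like in practice, the snippet below writes the three targets down and checks a provider's measured results against them. The `Targets` class, the field names, and the numbers in the usage note are illustrative assumptions, not values taken from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Targets:
    """Hypothetical guardrails; pick the numbers that fit your own workload."""
    p95_hit_ttfb_ms: float     # slowest acceptable p95 TTFB for cache hits
    max_error_rate: float      # highest tolerated share of failed requests (0..1)
    min_origin_offload: float  # lowest acceptable share of requests kept off the origin (0..1)


def unmet_targets(measured, targets):
    """Return the names of the targets that the measured results miss."""
    unmet = []
    if measured["p95_hit_ttfb_ms"] > targets.p95_hit_ttfb_ms:
        unmet.append("p95_hit_ttfb_ms")
    if measured["error_rate"] > targets.max_error_rate:
        unmet.append("error_rate")
    if measured["origin_offload"] < targets.min_origin_offload:
        unmet.append("origin_offload")
    return unmet
```

For example, with `Targets(p95_hit_ttfb_ms=100, max_error_rate=0.001, min_origin_offload=0.9)`, a provider measured at 80 ms, 0.5 percent errors, and 95 percent offload would miss only the error-rate target.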

CDN Latency Metrics That Reflect Real Speed

Averages lie. Tail latency is where complaints live, so score providers on p95 and p99.

The simplest model has two parts: how quickly the first byte arrives (TTFB), and how stable that time stays on messy networks.

One easy mistake: mixing hits and misses in one chart. Separate them, or you will reward a CDN for different caching behavior instead of actual speed.
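One way to keep hits and misses apart is to group TTFB samples by cache status before computing percentiles. The sketch below assumes samples arrive as `(cache_status, ttfb_ms)` pairs and uses the nearest-rank percentile definition; the status labels and function names are illustrative, and your provider's logs may use different labels.

```python
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile for an integer pct (1..100)."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, which avoids floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def latency_by_cache_status(samples):
    """Group (cache_status, ttfb_ms) samples and report p50, p95, p99 per group."""
    groups = defaultdict(list)
    for status, ttfb_ms in samples:
        groups[status].append(ttfb_ms)
    return {
        status: {f"p{p}": percentile(values, p) for p in (50, 95, 99)}
        for status, values in groups.items()
    }
```

Comparing the "HIT" rows across providers then measures edge speed, while the "MISS" rows mostly reflect the path back to your origin.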

Cache Efficiency And Origin Offload Metrics

You are not buying a CDN only for speed; you are buying fewer origin trips. If cache efficiency is weak, every miss turns into a slow origin request, and at real traffic volumes those slow requests number not in the tens or thousands but in the millions.

High-signal CDN metrics here are:

- Cache hit ratio (requests) and byte hit ratio (bytes)

- Origin requests per 1,000 user requests (simple offload score)

- Miss reasons (expired, bypassed, uncacheable, query variance)

- Revalidation rate (hidden “check origin anyway” behavior)

When two providers are close on latency, better offload usually wins because it reduces tail spikes and keeps your origin calm.
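The metrics listed above can be derived from edge logs with a few counters. This is a rough sketch that assumes each log record reduces to a `(cache_status, bytes_sent)` pair, that only `"HIT"` counts as served from cache, and that every other status (including a hypothetical `"REVALIDATED"` label) costs one origin trip. Real providers use their own status vocabularies, so adjust the labels accordingly.

```python
def cache_efficiency(records):
    """Summarize cache behavior from (cache_status, bytes_sent) records."""
    total = hits = revalidated = 0
    total_bytes = hit_bytes = 0
    for status, bytes_sent in records:
        total += 1
        total_bytes += bytes_sent
        if status == "HIT":
            hits += 1
            hit_bytes += bytes_sent
        elif status == "REVALIDATED":
            revalidated += 1
    if total == 0 or total_bytes == 0:
        raise ValueError("no traffic in sample")
    return {
        "hit_ratio": hits / total,                       # share of requests
        "byte_hit_ratio": hit_bytes / total_bytes,       # share of bytes
        "origin_per_1000": 1000 * (total - hits) / total,
        "revalidation_rate": revalidated / total,
    }
```

A large gap between `hit_ratio` and `byte_hit_ratio` usually means small objects cache well while large ones keep going back to the origin.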

Reliability Metrics That Keep Users From Seeing “Broken”

A fast CDN that fails is still a bad experience. Track reliability as rates, and break out edge versus origin.

Focus on:

- Success rate (2xx and 3xx as a percentage of total)

- Edge 5xx rate (CDN-generated errors)

- Timeout rate (often worse than a clean 5xx)

- Regional error concentration (one country failing can vanish in global stats)

If you can only pick one reliability number, use “failed or timed-out requests per 10,000.” It is easy to explain and hard to spin.
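A possible way to compute that number, both globally and per region, is sketched below. It assumes a timeout is recorded as `None` instead of a status code and that only 5xx responses and timeouts count as failures; whether 4xx responses belong in the count is a choice you should make for your own traffic.

```python
from collections import defaultdict


def is_failure(status):
    """Assumption: a timeout is logged as None; 5xx responses are failures."""
    return status is None or status >= 500


def failures_per_10k(statuses):
    """Failed or timed-out requests per 10,000 requests."""
    statuses = list(statuses)
    if not statuses:
        raise ValueError("no requests in sample")
    failed = sum(1 for status in statuses if is_failure(status))
    return 10_000 * failed / len(statuses)


def failures_per_10k_by_region(records):
    """Same metric, split by region, from (region, status) records."""
    by_region = defaultdict(list)
    for region, status in records:
        by_region[region].append(status)
    return {region: failures_per_10k(s) for region, s in by_region.items()}
```

With 9,900 clean requests from one region and 100 requests from another where 10 time out, the global figure is 10 per 10,000 while the second region sits at 1,000 per 10,000, which is exactly the kind of concentration a global number hides.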

Throughput And Load Handling Metrics

A CDN can look great at 100 requests per second and wobble at 10,000. Your evaluation should include controlled load tests and observation during real peaks.

Track:

- Max RPS while keeping p95 TTFB under your threshold

- Sustained throughput for large objects (app bundles, images, video segments)

- Peak absorption, meaning how performance changes during spikes

- HTTP/2 and HTTP/3 behavior (mobile networks can shift results)

Benchmarking only tiny files flatters everyone. Test the assets your users actually download.
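For the first item in the list above, a stepped load test gives you pairs of request rate and observed p95 TTFB. The sketch below assumes those pairs are already collected as `(rps, p95_ttfb_ms)` tuples and returns the highest rate reached before the first step that breaks the latency budget. The function name and the stop-at-first-breach rule are illustrative choices.

```python
def max_rps_within_budget(steps, p95_budget_ms):
    """Highest tested RPS reached before p95 TTFB first exceeds the budget.

    steps: iterable of (rps, p95_ttfb_ms) results from a stepped load test.
    Returns None if even the lowest step is over budget.
    """
    best = None
    for rps, p95_ms in sorted(steps):
        if p95_ms > p95_budget_ms:
            break  # stop at the first breach; later steps do not count
        best = rps
    return best
```

Running the same steps against each provider, with the same assets and budget, gives one comparable number per provider.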

Geography And ISP Coverage Metrics

This is where vendor “global” charts fall apart. Your users live on specific networks.

Slice your key metrics by:

- Country and city for your top markets

- ASN (ISP), especially mobile carriers

- Worst-market p95, not just best-market p50

To keep it honest, weight results by traffic share. A 10 percent slowdown in your biggest market matters more than a 30 percent gain where almost nobody visits.
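Traffic weighting can be as simple as a weighted mean over markets, reported alongside the worst market. In the sketch below, the market keys, the share values, and the function names are placeholders; the shares would come from your own analytics.

```python
def traffic_weighted(value_by_market, share_by_market):
    """Weighted mean of a per-market metric, using traffic share as the weight."""
    total_share = sum(share_by_market[m] for m in value_by_market)
    if total_share <= 0:
        raise ValueError("traffic shares must sum to a positive number")
    weighted_sum = sum(value_by_market[m] * share_by_market[m] for m in value_by_market)
    return weighted_sum / total_share


def worst_market(p95_by_market):
    """Market with the highest p95, so it is reported rather than averaged away."""
    return max(p95_by_market, key=p95_by_market.get)
```

Applied to the example above: if the biggest market holds 60 percent of traffic and the small one 2 percent, a 10 percent slowdown in the first and a 30 percent gain in the second contribute +6 and -0.6 points respectively, so the net effect on the weighted figure is still a slowdown.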

CDN Analytics And Measurement Hygiene

Good CDN analytics means faster debugging and fewer blind spots. That is part of performance, because time-to-diagnose affects real downtime.

Check:

- Analytics delay (how soon logs and metrics appear)

- Granularity (per region, cache status, status code)

- Exportability (can you stream logs to your own tools)

- RUM support plus synthetic probes (real users plus controlled tests)

I like using both: synthetic for apples-to-apples comparisons, RUM for real-world truth.

CDN Ranking Scorecard

Instead of debating screenshots, score categories consistently.

Keep it fair: same URLs, same cache rules, same TTLs, same origin, same time window. Otherwise your CDN ranking becomes a ranking of configuration differences.
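One possible shape for such a scorecard is a weighted sum over the six categories covered in this article. The weights and the 0 to 5 scale below are assumptions chosen for illustration; set them to match what matters for your workload before you look at any results.

```python
# Hypothetical weights per category; they should sum to 1.0.
WEIGHTS = {
    "latency": 0.25,
    "cache": 0.20,
    "reliability": 0.20,
    "throughput": 0.15,
    "coverage": 0.10,
    "analytics": 0.10,
}


def weighted_score(scores, weights=WEIGHTS):
    """Combine per-category scores (for example 0 to 5) into one number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[cat] * w for cat, w in weights.items()) / total_weight


def rank(providers, weights=WEIGHTS):
    """Provider names ordered from best to worst weighted score."""
    return sorted(
        providers,
        key=lambda name: weighted_score(providers[name], weights),
        reverse=True,
    )
```

Fixing the weights up front keeps the ranking from drifting toward whichever provider happened to produce the best-looking chart.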