Detecting bot traffic is crucial to maintaining the integrity and performance of your website. It involves identifying automated requests that can skew your analytics, steal content, or perform malicious activities.

Here's how you can detect bots and bot traffic on your website:

Analyzing Traffic Patterns

One of the first steps in bot traffic detection is to analyze your website’s traffic patterns. Bots often exhibit behaviors that are different from human users:

- Unusually High Traffic Spikes: Sudden surges in traffic, especially during odd hours, can indicate bot activity.

- High Bounce Rates: Bots might visit a page and leave immediately, leading to higher than normal bounce rates.

- Low Session Duration: Bots typically don’t spend much time on your site, resulting in very short session durations.
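
As a rough illustration of the spike check, the sketch below compares each hour's request count against the median hour. The function name `find_spikes` and the `multiplier` of 5 are assumptions for this example, not recommended values; tune them against your own traffic.

```python
from statistics import median

def find_spikes(hourly_counts, multiplier=5.0):
    """Return the indexes of hours whose request count far exceeds the typical hour.

    The median is used as the baseline because a single huge spike
    barely moves it, unlike the mean.
    """
    if not hourly_counts:
        return []
    # Guard against a zero baseline on very quiet sites.
    baseline = max(median(hourly_counts), 1.0)
    return [
        hour
        for hour, count in enumerate(hourly_counts)
        if count > multiplier * baseline
    ]

# Example: a normal day with one suspicious surge at 03:00.
counts = [100] * 24
counts[3] = 900
suspicious_hours = find_spikes(counts)
```

A flagged hour is only a prompt to look closer. A marketing campaign or a viral post produces the same shape, so combine this signal with the others in this article.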

Monitoring User Behavior

Website monitoring tools can help track user behavior to distinguish between bots and human users:

- Mouse Movements and Clicks: Humans navigate websites in non-linear patterns, often moving the mouse around the screen and clicking in varied ways. Bots, however, may follow a more rigid, predictable pattern.

- Keystroke Patterns: Humans type with varying speed and rhythm, while bots might input data at a consistent rate.

- Scroll Behavior: Natural scrolling behavior can be erratic and inconsistent, whereas bots might scroll in a linear and uniform manner.
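
One way to quantify "consistent rate" is to measure how much the gaps between events vary. This sketch assumes you already collect event timestamps in milliseconds on the client. The function name `looks_scripted` and the `min_cv` threshold are hypothetical choices for illustration.

```python
from statistics import mean, pstdev

def looks_scripted(timestamps_ms, min_cv=0.1):
    """Return True when the gaps between events are suspiciously uniform.

    Uses the coefficient of variation (standard deviation / mean) of the
    intervals between consecutive keystrokes, clicks, or scroll events.
    """
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if len(intervals) < 2:
        return False  # Not enough data to judge.
    average = mean(intervals)
    if average <= 0:
        return True  # Events arriving at the same instant are not human.
    return pstdev(intervals) / average < min_cv

# Evenly spaced input (every 50 ms) versus irregular human-like input.
bot_like = looks_scripted([0, 50, 100, 150, 200])
human_like = looks_scripted([0, 120, 310, 395, 640])
```

More sophisticated bots add random jitter to their timing, so treat a low variation score as one signal among several rather than proof.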

IP Address Analysis

Bot detection techniques often involve scrutinizing IP addresses:

- Repeated Access from the Same IP: Multiple requests from the same IP address in a short period can be a sign of bot activity.

- IP Blacklisting: Use lists of known malicious IP addresses to block or monitor traffic coming from these sources.

- Geolocation Checks: Analyzing the geographic origin of your traffic. If you notice a lot of traffic from regions where you don’t usually have users, it could be bot traffic.
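
The first two checks can be sketched with Python's standard library. The blocked network below is a reserved documentation range used as a placeholder, and `max_requests` is an assumed threshold. In practice you would load a maintained list and pick limits that match your traffic.

```python
import ipaddress
from collections import Counter

# Placeholder blocklist: 203.0.113.0/24 is reserved for documentation.
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]

def is_blocklisted(ip):
    """Return True if the address falls inside any blocked network."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in BLOCKED_NETWORKS)

def noisy_ips(request_ips, max_requests=100):
    """Return the set of IPs that made more than max_requests requests.

    request_ips is the list of client IPs seen within one time window,
    one entry per request.
    """
    counts = Counter(request_ips)
    return {ip for ip, total in counts.items() if total > max_requests}

window = ["198.51.100.4"] * 3 + ["198.51.100.9"]
flagged = noisy_ips(window, max_requests=2)
```

Keep in mind that many legitimate users can share one IP address behind a corporate network or mobile carrier, so a per-IP count alone can produce false positives.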

Analyzing HTTP Headers

HTTP headers can provide valuable clues for website bot detection:

- User-Agent Strings: Bots often have distinctive user-agent strings. Identifying unusual or known bot user-agent strings can help in detecting bots, although this header is easy to spoof and should not be trusted on its own.

- Referrer Information: Check where the traffic claims to come from. Bots may send unusual, implausible, or missing referrers.
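
A minimal sketch of both header checks follows. The marker list in `BOT_UA_MARKERS` is a small illustrative sample, not a complete or authoritative list, and `header_flags` is a hypothetical helper name.

```python
# Illustrative substrings only; real deployments use maintained lists.
BOT_UA_MARKERS = ("bot", "crawler", "spider", "curl", "python-requests", "headless")

def header_flags(headers):
    """Return a list of reasons the request headers look automated."""
    # HTTP header names are case-insensitive, so normalize them first.
    normalized = {name.lower(): value for name, value in headers.items()}
    user_agent = normalized.get("user-agent", "").lower()

    flags = []
    if not user_agent:
        flags.append("missing-user-agent")
    elif any(marker in user_agent for marker in BOT_UA_MARKERS):
        flags.append("bot-like-user-agent")
    # The header is spelled "Referer" in HTTP.
    if "referer" not in normalized:
        flags.append("missing-referer")
    return flags

script_flags = header_flags({"User-Agent": "curl/8.4.0"})
browser_flags = header_flags({
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Referer": "https://example.com/",
})
```

A missing referrer is common for direct visits and privacy-focused browsers, and the `bot` marker also matches well-behaved search engine crawlers, so these flags are better used for scoring than for outright blocking.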

Using CAPTCHAs

CAPTCHAs are a direct method to differentiate between bots and humans:

- Challenge-Response Tests: Requiring users to solve puzzles, recognize images, or type distorted text can block bots that can't pass these tests.

- Invisible CAPTCHAs: These don't interrupt user experience but can detect bots by monitoring user interactions.

Rate Limiting

Rate limiting involves controlling the number of requests a user can make in a given period:

- Request Thresholds: Setting thresholds for how many requests a user can make per minute or hour. Exceeding these limits can trigger further bot detection mechanisms.

- Dynamic Limits: Adjusting limits based on traffic patterns and user behavior to more accurately identify bots.
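
A request threshold can be sketched as a sliding-window counter. This example keeps state in memory and takes the current time as an argument so it is easy to follow. The class name and limits are assumptions, and a production setup would more likely use shared storage or a feature of your web server or CDN.

```python
from collections import defaultdict, deque

class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent request times

    def allow(self, client_id, now):
        hits = self._hits[client_id]
        # Forget requests that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # Over the threshold: block or escalate.
        hits.append(now)
        return True

limiter = SlidingWindowLimiter(max_requests=2, window_seconds=60)
results = [limiter.allow("client-a", t) for t in (0, 1, 2, 61)]
```

A `False` result does not have to mean an immediate block. It can instead trigger a CAPTCHA or closer monitoring, in line with the escalation described above.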

Machine Learning and AI

Modern website monitoring tools use machine learning and AI to detect bots:

- Behavioral Analysis: Using algorithms to learn what normal behavior looks like and identifying anomalies that indicate bot activity.

- Pattern Recognition: Detecting patterns in data that are consistent with known bot activities.
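
Commercial tools use far richer models, but the core idea of learning a baseline and flagging deviations can be shown with a simple statistical stand-in. The sketch below is not machine learning in any serious sense. It learns the mean and standard deviation of one feature (for example, requests per minute in known-good sessions) and flags values more than `k` standard deviations away. The class name and the choice of `k = 3` are assumptions.

```python
from statistics import mean, stdev

class BaselineModel:
    """Learn what 'normal' looks like for one numeric feature."""

    def fit(self, normal_values):
        # Requires at least two observations of normal behavior.
        self.mu = mean(normal_values)
        self.sigma = stdev(normal_values)
        return self

    def is_anomaly(self, value, k=3.0):
        if self.sigma == 0:
            return value != self.mu
        # Flag values more than k standard deviations from the mean.
        return abs(value - self.mu) / self.sigma > k

# Requests per minute observed in sessions believed to be human.
model = BaselineModel().fit([10, 12, 11, 9, 13, 10, 11])
burst_is_anomaly = model.is_anomaly(120)
typical_is_anomaly = model.is_anomaly(12)
```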

Honeypots

Honeypots are traps set up to detect bots:

- Invisible Links or Forms: Placing links or forms on your website that are invisible to human users but can be accessed by bots. Interaction with these elements can signal bot activity.

- Monitoring Access: Tracking any access to these honeypots and treating such traffic with suspicion.

Honeypots work because the deceptive content is never meant to be reached by humans: a real visitor cannot see a hidden link or form field, so they have no reason to interact with it.

Bots that scrape your site read the raw page markup and will interact with these elements, revealing their presence. This data helps in identifying and blocking bot traffic.
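
On the server side, a form honeypot comes down to checking whether a field that humans never see was filled in. This sketch assumes the form contains a field hidden with CSS. The field name `website_url` is hypothetical, and `form_data` stands for whatever dictionary of submitted values your framework provides.

```python
# Hypothetical name of a form field that is hidden from human visitors.
HONEYPOT_FIELD = "website_url"

def is_honeypot_hit(form_data):
    """Return True if the hidden field contains any value.

    Browsers submit hidden fields as empty strings, so a non-empty
    value suggests an automated form filler.
    """
    value = form_data.get(HONEYPOT_FIELD, "")
    return bool(value.strip())

human_submission = {"email": "reader@example.com", "website_url": ""}
bot_submission = {"email": "x@example.com", "website_url": "http://spam.example"}
human_hit = is_honeypot_hit(human_submission)
bot_hit = is_honeypot_hit(bot_submission)
```

Browser autofill and assistive technologies can occasionally populate hidden fields, so it is safer to treat a hit as grounds for suspicion, as described above, than as an automatic ban.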

Log File Analysis

Analyzing server logs can reveal bot activity:

- Frequency and Timing of Requests: Bots often generate requests at regular intervals or at very high frequencies.

- Request Types: Unusual HTTP methods or repeated requests for non-existent pages can indicate bots.
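
As an example of the second check, the sketch below counts "not found" responses per IP. It assumes your server writes logs in the Common Log Format (or the combined format, which starts the same way); adjust the pattern if your format differs. The function name is hypothetical.

```python
import re
from collections import Counter

# Matches the start of a Common Log Format line:
# ip ident user [time] "METHOD path protocol" status ...
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3})'
)

def not_found_counts(lines):
    """Count 404 responses per client IP."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and match.group("status") == "404":
            counts[match.group("ip")] += 1
    return counts

sample_log = [
    '203.0.113.9 - - [10/Oct/2024:13:55:36 +0000] "GET /wp-login.php HTTP/1.1" 404 153',
    '203.0.113.9 - - [10/Oct/2024:13:55:37 +0000] "GET /admin.php HTTP/1.1" 404 153',
    '198.51.100.2 - - [10/Oct/2024:13:56:01 +0000] "GET /index.html HTTP/1.1" 200 5120',
]
probing = not_found_counts(sample_log)
```

An IP with many 404s for paths your site never had, such as login pages for software you do not run, is usually a scanner probing for vulnerabilities.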

Implementing Web Scraping Countermeasures

Bots often scrape websites to collect data. These measures make scraping harder:

- JavaScript Challenges: Requiring the browser to execute JavaScript before content loads can block simpler bots that don't run JavaScript.

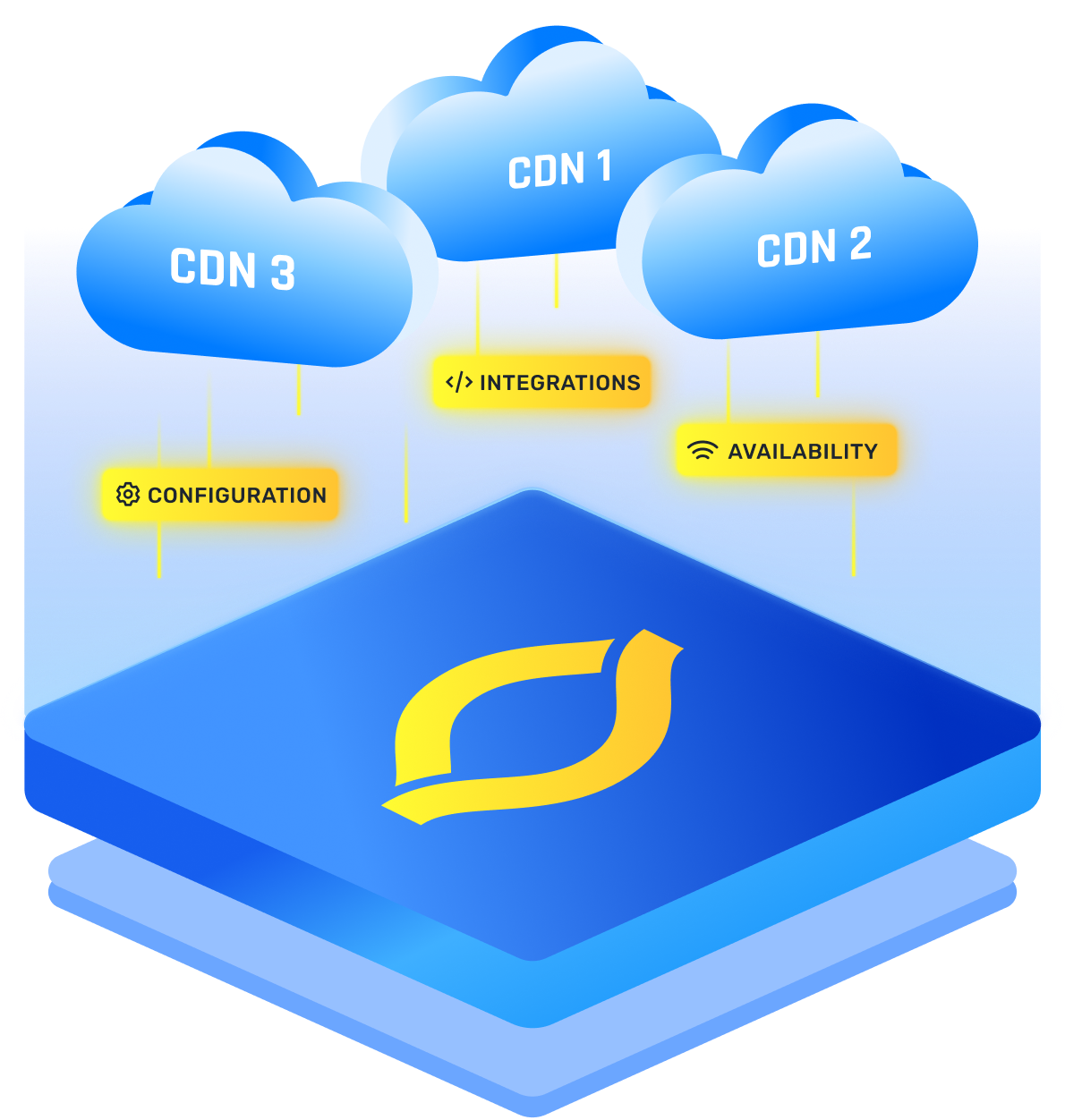

- Content Delivery Networks (CDNs): Using CDNs that offer bot protection features can help mitigate bot traffic.

Integrating Bot Detection Services

There are specialized services designed for bot traffic detection:

- Third-Party Services: Integrating third-party bot detection services can provide advanced detection and mitigation features.

- Regular Updates: These services often update their databases and algorithms to keep up with new bot techniques.

No single technique catches every bot. Combining these detection methods with website monitoring tools gives you a much clearer picture of bot behavior on your site.