Rate limiting, a dynamic technique employed to control the frequency of events in a given system, often seems cumbersome and intimidating. In reality, it is the hero on the front line, preventing potential breaches and securing data access at a granular level.

In this article, we will uncover the essence of Rate Limiting, explore its functioning, underscore its significance, and shed light on its implementation strategies, particularly in real time across multiple Content Delivery Networks (CDNs).

What is Rate Limiting?

Rate Limiting, in the context of computer networks, is a security strategy used to limit the number of requests that a client can make to a server in a specified period of time.

It is akin to a bodyguard at a club entrance, controlling the inflow of guests to maintain a manageable crowd inside. The mechanics of Rate Limiting revolve around rules defined by the server.

These rules are called Rate Limiting algorithms, and they control the frequency of client requests based on various parameters like IP address, URL paths, or client user identities.

This protective measure helps to secure servers from distributed denial-of-service (DDoS) attacks, brute-force attacks, and other types of cyber threats.


How Rate Limiting Works

At the core of Rate Limiting is the Rate Limiting algorithm: a set of predefined rules used by a server to manage incoming client requests.

Here is how two widely used algorithms work:

Fixed Window

This algorithm allocates a fixed number of requests for a set time period (e.g. 1,000 requests per hour).

Once this limit is reached, the server will reject any additional requests from the client.
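To make the Fixed Window idea concrete, here is a minimal in-memory sketch. The class name `FixedWindowLimiter`, the `allow` method, and the optional `now` argument (used to make the example deterministic) are assumptions made for illustration, not part of any particular library.

```python
import time


class FixedWindowLimiter:
    """Allow at most `limit` requests per client in each fixed time window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        # client_id -> (window_index, requests counted in that window)
        self.state = {}

    def allow(self, client_id, now=None):
        now = time.time() if now is None else now
        # Every timestamp maps to exactly one window, e.g. one clock hour.
        window_index = int(now // self.window_seconds)
        stored_index, count = self.state.get(client_id, (window_index, 0))
        if stored_index != window_index:
            count = 0  # a new window has started, so the counter resets
        if count >= self.limit:
            return False  # limit reached: reject until the next window
        self.state[client_id] = (window_index, count + 1)
        return True


limiter = FixedWindowLimiter(limit=3, window_seconds=3600)
print([limiter.allow("client-a", now=t) for t in (0, 1, 2, 3)])
```

With a limit of 3 per hour, the fourth request in the same hour is rejected, and the counter starts again from zero once the next hour begins.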

Sliding Window Log

This is a more advanced version of Fixed Window that uses a rolling time window to offer a fairer approach.

It upholds the rate limit while allowing flexibility in how requests are distributed. This doesn’t mean that requests are spread out evenly to prevent bursts.

Rather, what sets the Sliding Window Log apart is that it doesn’t tie the ‘X’ requests to a specific clock-hour window: the count always covers the most recent window, measured back from the current request.
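A rolling window can be sketched by keeping a log of timestamps per client and discarding entries older than the window. The names below (`SlidingWindowLogLimiter`, `allow`, the `now` argument) are illustrative assumptions, and the in-memory storage is only suitable for a single process.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLogLimiter:
    """Allow at most `limit` requests per client in any rolling window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        # client_id -> timestamps of accepted requests, oldest first
        self.logs = defaultdict(deque)

    def allow(self, client_id, now=None):
        now = time.time() if now is None else now
        log = self.logs[client_id]
        # Drop timestamps that have aged out of the rolling window.
        while log and log[0] <= now - self.window_seconds:
            log.popleft()
        if len(log) >= self.limit:
            return False
        log.append(now)
        return True


limiter = SlidingWindowLogLimiter(limit=2, window_seconds=60)
print([limiter.allow("client-a", now=t) for t in (0, 10, 30, 60)])
```

In this run the request at second 30 is rejected because two requests already fall inside the last 60 seconds, while the request at second 60 is accepted because the one made at second 0 has aged out. The trade-off is memory: one timestamp is stored per accepted request.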

Scenario: Weather Forecasting Service API

Imagine "WeatherWise," an online weather forecasting service that offers detailed weather data to third-party developers through an API. This data is crucial for various applications like travel planning, agriculture, outdoor event management, and more.

Due to the high demand for accurate weather information, WeatherWise needs to ensure fair access to its API. It implements a Sliding Window Log algorithm to limit requests and provide equitable access to all users.

- Subscription Plan: Developers are offered a subscription plan that allows 100 requests within any 60-minute window.

- First 30 Minutes:

  - 0-15 Minutes: A travel planning app makes 70 requests to fetch weather data for multiple locations.

  - 16-30 Minutes: An agriculture app makes 30 requests to obtain weather forecasts for different farms.

  - Total: 100 requests

- Next 30 Minutes:

  - 31-45 Minutes: A third-party event management app tries to make requests but is denied, as the limit of 100 requests in the last 60 minutes has been reached.

  - 46-60 Minutes: Requests are still denied, because the last 60 minutes still contain all 100 earlier requests.

- Beyond 60 Minutes:

  - 61-75 Minutes: The window slides past the first 15 minutes. As the 70 requests made then drop out of the count, new requests from other apps, such as a sports scheduling app, are allowed.

  - Continuously Sliding: The window keeps sliding, considering only the last 60 minutes from the current time. This ensures that no single app can monopolize access by timing its requests at the end of one fixed hourly window and the beginning of the next.

Core Rate-Limiting Algorithms

Most production systems combine several approaches so that each algorithm picks up where another leaves off.

Below are the three foundational techniques you will see again and again in API gateways, service-mesh sidecars, and CDN edge code:

Choosing between them: Token Bucket for burst-friendly APIs, Leaky Bucket for ultra-smooth traffic to legacy services, and GCRA when you need both efficiency and mathematical rigor across a large keyset.

Token Bucket

Think of it as: A water cooler that refills at a steady drip but can serve multiple cups at once.
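A minimal sketch of the water-cooler picture follows: tokens refill at a steady rate up to a capacity, and each request spends one. The class and parameter names (`TokenBucket`, `capacity`, `refill_rate`, `cost`) are assumptions chosen for this example.

```python
import time


class TokenBucket:
    """Refill at a steady rate; serve bursts up to `capacity` at once."""

    def __init__(self, capacity, refill_rate, now=None):
        self.capacity = capacity
        self.refill_rate = refill_rate  # tokens added per second
        self.tokens = float(capacity)   # start with a full bucket
        self.updated_at = time.monotonic() if now is None else now

    def allow(self, cost=1.0, now=None):
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated_at)
        # Add the tokens earned since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.updated_at = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(capacity=5, refill_rate=1, now=0)
print([bucket.allow(now=0) for _ in range(6)])  # a burst of six requests
```

Here the first five requests of the burst pass and the sixth is refused; two seconds later, two more tokens have dripped in.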

Leaky Bucket (Fixed-Rate Queue)

Think of it as: A funnel with a pin-hole, where the water may surge in, but it drips out at a constant pace.
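The funnel can be sketched as a bounded queue that releases at most one request per fixed interval. Everything named here (`LeakyBucketQueue`, `offer`, `drain`, `leak_interval`) is a hypothetical interface for illustration; a production version would typically drain from a timer or worker loop.

```python
from collections import deque


class LeakyBucketQueue:
    """Queue incoming requests and release them at a constant pace."""

    def __init__(self, capacity, leak_interval):
        self.capacity = capacity            # how many requests may wait
        self.leak_interval = leak_interval  # seconds between releases
        self.queue = deque()
        self.next_release = 0.0             # earliest time the next request may leave

    def offer(self, request, now):
        """Try to queue a request; return False if the bucket overflows."""
        if len(self.queue) >= self.capacity:
            return False
        if not self.queue and self.next_release < now:
            # The bucket was idle, so the first request can leave right away.
            self.next_release = now
        self.queue.append(request)
        return True

    def drain(self, now):
        """Return (release_time, request) pairs that are due by `now`."""
        released = []
        while self.queue and self.next_release <= now:
            released.append((self.next_release, self.queue.popleft()))
            self.next_release += self.leak_interval
        return released


bucket = LeakyBucketQueue(capacity=3, leak_interval=1.0)
accepted = [bucket.offer(name, now=0) for name in ("r1", "r2", "r3", "r4")]
print(accepted, bucket.drain(now=2.5))
```

The surge of four requests fills the bucket and the fourth overflows, while the accepted ones leave one second apart regardless of how quickly they arrived.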

Generic Cell Rate Algorithm (GCRA)

Think of it as: An appointment scheduler that stamps the earliest legal time for the next visit.
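The appointment-scheduler picture maps to a single stored value per key, often called the theoretical arrival time (TAT). The sketch below assumes a rate in requests per second and a burst allowance; the class and method names are illustrative, not taken from a specific library.

```python
import time


class GCRALimiter:
    """Track one timestamp per key instead of a counter or a log."""

    def __init__(self, rate_per_second, burst):
        self.emission_interval = 1.0 / rate_per_second
        # How far ahead of schedule a client may run (burst of `burst` requests).
        self.tolerance = self.emission_interval * (burst - 1)
        self.tat = {}  # key -> theoretical arrival time of the next request

    def allow(self, key, now=None):
        now = time.monotonic() if now is None else now
        tat = self.tat.get(key, now)
        if now < tat - self.tolerance:
            return False  # arrived before the earliest legal time
        # Stamp the schedule forward by one emission interval.
        self.tat[key] = max(now, tat) + self.emission_interval
        return True

    def earliest_allowed(self, key, now):
        """Earliest time at which the next request for `key` would pass."""
        return max(now, self.tat.get(key, now) - self.tolerance)


limiter = GCRALimiter(rate_per_second=2, burst=3)
print([limiter.allow("client-a", now=0) for _ in range(4)])
print(limiter.earliest_allowed("client-a", now=0))
```

Because only one number is stored per key, this approach stays cheap even across a large keyset, which is the efficiency the comparison above refers to.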

Adaptive (Dynamic) Rate Limiting

Static ceilings are fine until a viral campaign, a sudden DDoS, or an overnight cost spike blows past your worst-case math.

Adaptive Rate Limiting (ARL) solves this by letting the limit itself move in real time.

Here’s how it works:

- Telemetry loop – Collect live metrics such as CPU%, error-rate, tail-latency, and customer tier.

- Policy engine – Evaluate rules or ML models that translate telemetry into a target rate.

- Enforcement layer – Push new token-bucket or GCRA parameters to edge nodes or sidecars every N seconds.
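The policy-engine step in the loop above can be sketched as a plain function that turns telemetry into a target rate. The thresholds, multipliers, tier names, and the `Telemetry` structure are all assumptions made up for this example; real values would come from your own capacity data.

```python
from dataclasses import dataclass


@dataclass
class Telemetry:
    cpu_percent: float     # 0 to 100
    error_rate: float      # fraction of failed requests, 0.0 to 1.0
    p99_latency_ms: float  # tail latency in milliseconds


def target_rate(base_rate, telemetry, tier):
    """Translate live metrics into a per-client rate (requests per second)."""
    # All thresholds and multipliers below are illustrative assumptions.
    overloaded = (
        telemetry.cpu_percent > 85
        or telemetry.error_rate > 0.05
        or telemetry.p99_latency_ms > 800
    )
    has_headroom = (
        telemetry.cpu_percent < 50
        and telemetry.error_rate < 0.01
        and telemetry.p99_latency_ms < 200
    )
    if overloaded:
        # Shed load from non-premium tiers first; premium keeps its base rate.
        return base_rate if tier == "premium" else base_rate * 0.6
    if has_headroom:
        return base_rate * 1.2
    return base_rate


stressed = Telemetry(cpu_percent=92, error_rate=0.08, p99_latency_ms=950)
print(target_rate(100, stressed, "anonymous"), target_rate(100, stressed, "premium"))
```

The enforcement layer would then push the returned rate to the edge as a new token-bucket or GCRA parameter on each cycle.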

Example: Live-Sports Streaming API

Scenario: A football final begins at 18:00 UTC. Concurrent viewers jump 8× in 90 seconds.

Response with ARL:

- Pre-kickoff warm-up – Policy engine sees rising requests per second but healthy margins, and nudges up the bucket capacity (B) so the mobile apps can pre-fetch.

- Halftime surge – Error rate climbs; engine tells edges to cut rate for anonymous users by 40 %. Premium subscribers remain unaffected.

- Post-match cool-down – Metrics normalize; limits slowly revert via exponential decay to default.

Single-machine System Vs. Distributed System

Now, let’s get into the technical challenges of implementing Rate Limiting in a distributed system like a CDN as compared to a single-machine system:

- Single-machine System: The number of requests from a client is restricted based on identifiers like IP address or API key, within a specific time window. However, as the scale of users or services amplifies, so does the necessity for advanced Rate Limiting mechanisms to cope with the increasing complexity.

- Distributed System (CDN): Managing a distributed system is akin to directing a well-choreographed dance where each node must be in sync, capable of growing, quick in response, consistent in data, and resilient in the face of adversity. It’s a balancing act, but when performed correctly, it can lead to a seamless and efficient system.

Example 1: E-Commerce Platform (Single-Machine System)

Imagine an e-commerce platform, "ShopEase," operating on a single server. It offers a wide range of products, and its API is consumed by various third-party applications, including price comparison sites, recommendation engines, and mobile apps.

- Identification: Requests are restricted based on IP address or API key, with limits like 200 requests per minute.

- Scalability Issues: As the user base grows, so does the complexity. The server might struggle to handle the increase in requests.

- Solution: Implementing advanced Rate Limiting mechanisms and potentially moving to a distributed system to handle the growing demand.

Example 2: Global News Portal (Distributed System - CDN)

Now consider "NewsHub," a global news portal that serves content to millions of users worldwide. To ensure low latency and high availability, NewsHub uses a CDN with servers located in various regions.

- Coordination: Directing the nodes in the CDN is like orchestrating a dance; each server must be in sync.

- Scaling: The CDN must be capable of growing, responding quickly, maintaining consistency, and being resilient.

- Complex Rate Limiting: Implementing Rate Limiting in a CDN requires a global perspective, tracking requests across all nodes.

- Solution: Using a distributed algorithm like Global Rate Limiting that considers the total requests across all nodes.
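The idea of counting requests across all nodes can be sketched with a shared counter that every edge node consults. In this example an in-memory class stands in for a networked shared store, and all names (`SharedCounterStore`, `EdgeNode`, `global_limit`) are hypothetical.

```python
import threading


class SharedCounterStore:
    """In-memory stand-in for a shared store that all nodes can reach."""

    def __init__(self):
        self._lock = threading.Lock()
        self._counts = {}

    def increment(self, key):
        # Atomic increment-and-read, so concurrent nodes never lose an update.
        with self._lock:
            self._counts[key] = self._counts.get(key, 0) + 1
            return self._counts[key]


class EdgeNode:
    """One CDN node that enforces a limit shared with every other node."""

    def __init__(self, name, store, global_limit, window_seconds):
        self.name = name
        self.store = store
        self.global_limit = global_limit
        self.window_seconds = window_seconds

    def allow(self, client_id, now):
        window_index = int(now // self.window_seconds)
        # The counter is keyed by client and window, not by node.
        total = self.store.increment((client_id, window_index))
        return total <= self.global_limit


store = SharedCounterStore()
paris = EdgeNode("paris", store, global_limit=3, window_seconds=60)
tokyo = EdgeNode("tokyo", store, global_limit=3, window_seconds=60)
print([node.allow("client-a", now=5) for node in (paris, tokyo, paris, tokyo)])
```

The fourth request is rejected even though each node has only seen two, because the limit applies to the total. In practice every check costs a round trip to the shared store, which is the latency-versus-consistency trade-off that makes distributed Rate Limiting harder than the single-machine case.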


Rate Limiting Importance

Rate Limiting assists in API security, protecting APIs from being exploited or misused. When applied at an IP level, IP Rate Limiting can prevent a single user or client from monopolizing resources, ensuring fair usage and availability for all clients.

It also acts as a preventive measure against web scrapers or bots that attempt to extract valuable information from a website at high speed. Slowing the request rate makes such activities slower, more complicated, and less worthwhile for whoever runs them.

Consider a scalper-programmed bot trying to purchase all the tickets to a festival. With the correct rate-limiting restrictions set up, not only will its attempts be futile, but you will also be able to distribute tickets to a wider audience, since your server won’t be stuck processing a boatload of bot requests.

Testing Rate Limiting

Testing Rate Limiting helps ensure that your setup works as intended and protects your systems as expected.

There are numerous tools and techniques available to test rate limiting. Load testing tools such as Apache JMeter, Gatling, or Locust can simulate a high number of requests to your server.

Using these tools, you can observe how your system responds when the request limit is reached. Are additional requests appropriately rejected? Does the system remain stable under the load? Answers to these questions confirm the efficiency of your Rate Limiting setup.
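The questions above translate directly into assertions. The sketch below runs entirely in-process against a hypothetical handler that serves a fixed number of requests and then answers with HTTP 429; with a real load testing tool, the same tallies would come from actual HTTP responses.

```python
from collections import Counter


def make_handler(limit):
    """Hypothetical endpoint: serves `limit` requests, then answers 429."""
    served = 0

    def handle_request():
        nonlocal served
        if served >= limit:
            return 429  # Too Many Requests
        served += 1
        return 200

    return handle_request


def run_burst(handler, total_requests):
    """Fire a burst of requests and tally the status codes returned."""
    return Counter(handler() for _ in range(total_requests))


results = run_burst(make_handler(limit=100), total_requests=150)
print(dict(results))
```

A passing test would check that exactly the permitted number of requests returned 200 and that every request beyond the limit returned 429.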

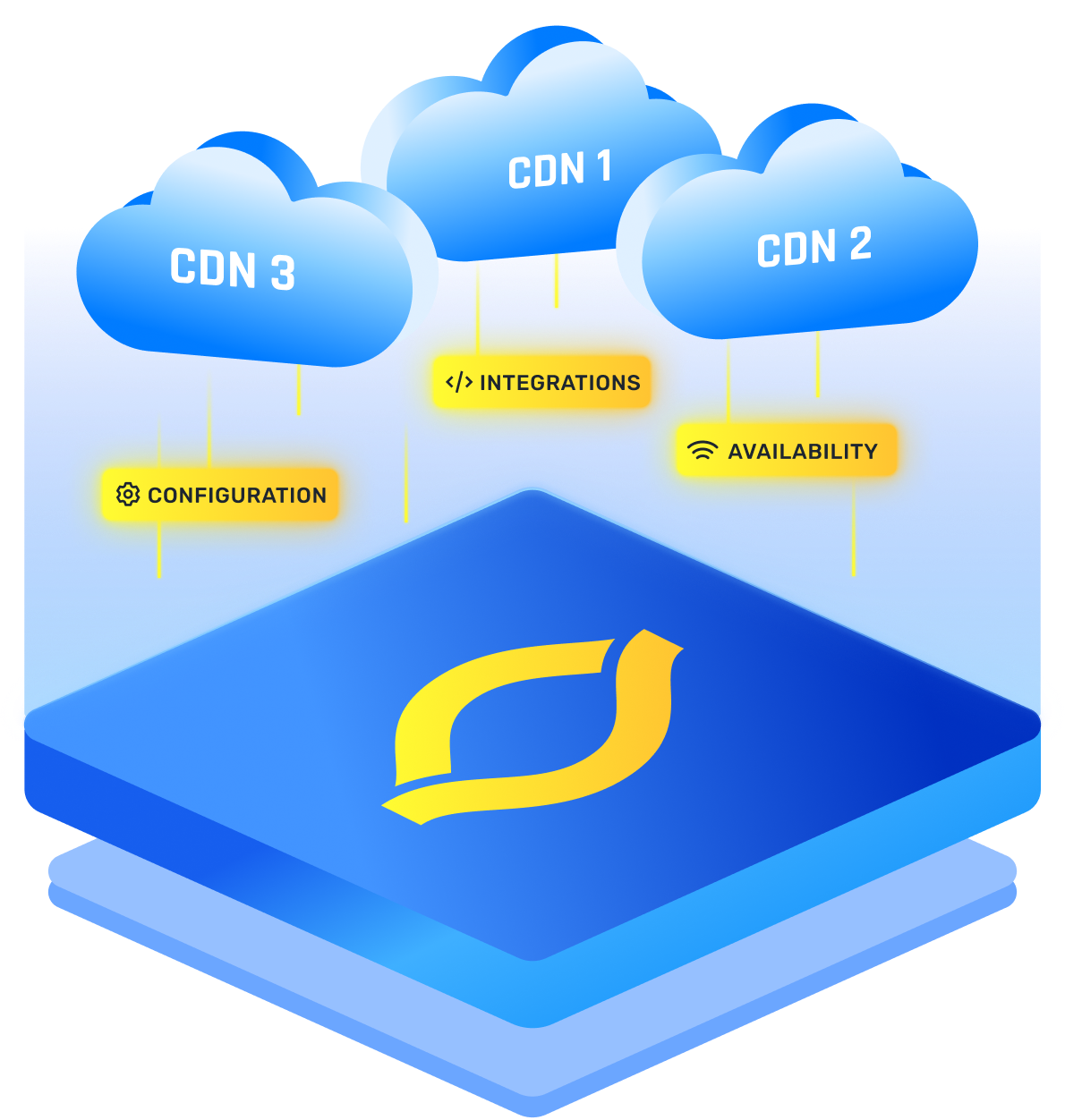

Implementing Rate Limiting Across Multiple CDNs

Implementing Rate Limiting across multiple CDNs presents a unique challenge, and two main strategies have emerged to tackle it.

- Extra Tier with a Security Service: Here, a third-party security service like Imperva is installed as an extra tier. The traffic flows from the CDN to the origin server via this security service, adding an extra layer of security but at the cost of increased latency.

- CDN Providers: Under this method, Rate Limiting is provided by each CDN provider’s own service. However, this approach isn’t as effective and may increase the potential for breaches, since the CDNs are blind to each other and none of them can see the entire traffic picture.

Conclusion

In essence, Rate Limiting is an effective strategy for regulating request flow and thwarting potential attacks. With a range of Rate Limiting algorithms and flexible implementation strategies across multiple CDNs, it helps keep performance steady while strengthening security.

FAQs

1. How does rate limiting differ from other forms of traffic control or throttling?

Traditional traffic-shaping tools prioritize or delay packets; rate limiting enforces a hard ceiling on request counts within a window. By explicitly limiting rate at the application or edge layer, a rate limiter returns a 429 status instead of silently buffering, so critical resources stay predictable and protected.

2. How does global rate limiting work across multiple servers or CDNs?

A global rate limiter coordinates counters through shared storage (Redis, Consul, or proprietary edge signals). Each server checks the central quota before accepting a request, ensuring no point-of-presence can be abused. In multi-CDN setups, token replication or synchronized leaky buckets keep the aggregate rate limited consistently worldwide.

3. How does rate limiting protect against DDoS attacks, brute-force attempts, and API abuse?

During a DDoS flood or credential-stuffing storm, the rate limiter detects when the permitted threshold is crossed and immediately flags a rate limit exceeded condition (HTTP 429). Offending IPs or API keys are delayed or blocked, preventing resource exhaustion while genuine users continue unhindered, turning brute-force volume into harmless noise.

4. How can rate limiting improve the stability and scalability of web applications and APIs?

By smoothing bursts and shielding back-end databases, rate limiting transforms unpredictable peaks into manageable flows. Because every client is rate limited to an agreed budget, thread pools, caches, and connection pools reach equilibrium instead of failing in a cascade under load. This predictability lets teams scale horizontally with smaller, cost-efficient nodes.