The ever-growing internet is supported by multiple data transfer mechanisms. One of them is especially useful when the size of the transmitted data cannot be known in advance. Introduced as part of the Hypertext Transfer Protocol (HTTP) version 1.1, it lets a sender start transmitting before it knows how much data it will send.

This guide explores HTTP Chunked Encoding, how it fundamentally changes the data transfer process, and why it's so important in modern web communications.

What is HTTP Chunked Encoding?

HTTP Chunked Encoding is a streaming data transfer mechanism; what sets it apart is its approach to managing data flow. Instead of sending a complete data set in one go, it breaks the data stream into a series of non-overlapping segments, aptly termed "chunks." Each chunk is prefixed with its own size and sent in order over the same connection. This segmentation allows for a continuous data flow without the need for the sending party to determine the entire data size beforehand.

It's particularly useful in situations where the total size of the response is unknown or cannot be predetermined. For instance, in the case of a large file or a dynamically generated content stream, the total size might not be clear at the start of the transmission. HTTP Chunked Encoding elegantly solves this problem by transmitting the data in manageable chunks.

Anatomy of a Chunk

Each chunk is sent with its own size header, which tells the receiver how much data to expect in that chunk. The receiver processes these chunks sequentially, assembling the complete data set as it arrives. This method not only ensures efficient data transfer but also enhances the responsiveness of web applications. By sending data as it becomes available, chunked encoding allows for faster start times in data processing and rendering, improving the overall user experience.

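Because each chunk declares its own size, the receiver always knows where one chunk ends and the next begins, and a missing zero-size final chunk tells it that the response was cut short. Chunked encoding does not provide error recovery, though: a malformed or truncated chunk invalidates the rest of the message, so the receiver has to treat the response as incomplete. This ability to detect truncation is part of what makes HTTP Chunked Encoding a preferred choice for streaming large amounts of data over unpredictable internet connections.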

The Mechanics of Chunked Encoding

HTTP Chunked Encoding optimizes data transfer in situations where the total size of the response is uncertain until the request is fully processed.

The process unfolds in a series of well-defined steps, each crucial for the seamless transmission of data:

1. Initiating the Transfer

When a server prepares to send data to a client, especially large or dynamically generated content, it often cannot determine the total size of the response in advance.

This is where chunked encoding becomes particularly useful. For instance, consider a scenario where a server is generating a large HTML table based on a complex database query, or transmitting a large image that is being processed.

In these cases, the server opts to use chunked encoding to manage the data transfer efficiently.

2. Sending Data in Chunks

To indicate that the data is being sent in chunks, the server includes a Transfer-Encoding: chunked header in its response. This header is a clear signal to the client that the data will be received in a series of chunks rather than a single block.

The data is broken down into smaller, manageable 'chunks'. Each chunk consists of two parts: a header and the actual data. The header is a hexadecimal number that indicates the size of the chunk in bytes, followed by a carriage return and a line feed.

The data that follows this header is exactly the size specified in the header. After the data, another carriage return and line feed signify the end of the chunk.
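The framing described above can be sketched in a few lines of Python. This is a minimal illustration, not a full HTTP implementation; the function name `encode_chunk` is hypothetical and chosen only for this example.

```python
def encode_chunk(data: bytes) -> bytes:
    """Frame one non-empty piece of data as a single HTTP/1.1 chunk."""
    if not data:
        # A zero-length chunk is reserved for marking the end of the body.
        raise ValueError("data chunks must not be empty")
    size_line = format(len(data), "x").encode("ascii")  # size in hexadecimal
    # size line, CRLF, exactly len(data) bytes of data, CRLF
    return size_line + b"\r\n" + data + b"\r\n"


print(encode_chunk(b"Hello"))       # b'5\r\nHello\r\n'
print(encode_chunk(b"x" * 26)[:4])  # b'1a\r\n' (26 bytes is 1a in hexadecimal)
```

Note that the size counts only the data bytes; the two CRLF pairs are framing and are not included in it.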

3. Marking the End of Data

The end of the data stream is marked by a final chunk of zero size. This chunk is followed by an optional trailer of additional headers and a final carriage return and line feed, signaling the end of the response.
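As a rough sketch of that terminator, the helper below builds the zero-size chunk with an optional trailer section. The name `encode_last_chunk` and the `X-Checksum` trailer field are assumptions made up for illustration.

```python
from typing import Mapping, Optional


def encode_last_chunk(trailers: Optional[Mapping[str, str]] = None) -> bytes:
    """Build the zero-size chunk that ends a chunked body."""
    out = b"0\r\n"  # a chunk size of zero means "no more data"
    for name, value in (trailers or {}).items():
        # Optional trailer fields use the same "Name: value" form as headers.
        out += f"{name}: {value}\r\n".encode("latin-1")
    return out + b"\r\n"  # the final CRLF closes the response body


print(encode_last_chunk())                       # b'0\r\n\r\n'
print(encode_last_chunk({"X-Checksum": "abc"}))  # b'0\r\nX-Checksum: abc\r\n\r\n'
```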

4. Client-Side Processing

On the client side, as each chunk is received, its size is read first, which tells the client how much data to expect in that chunk.

The client then reads the data of that chunk, processes it, and then moves on to the next chunk. This continues until the zero-size chunk is received, which indicates that there are no more chunks.
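The read loop described above might look like the following sketch, which reads from any binary stream (here an in-memory `io.BytesIO` standing in for a socket). The function name `iter_chunks` is hypothetical, and the parsing is deliberately lenient; a production client would validate the input more strictly.

```python
import io
from typing import BinaryIO, Iterator


def iter_chunks(stream: BinaryIO) -> Iterator[bytes]:
    """Yield the data of each chunk until the zero-size chunk is reached."""
    while True:
        size_line = stream.readline()  # e.g. b"5\r\n"
        # Ignore any chunk extensions after ";" and parse the hexadecimal size.
        size = int(size_line.split(b";")[0].strip(), 16)
        if size == 0:
            # Skip optional trailer lines up to the closing empty line.
            while stream.readline() not in (b"\r\n", b""):
                pass
            return
        data = stream.read(size)  # exactly `size` bytes of chunk data
        stream.read(2)            # consume the CRLF that ends the chunk
        yield data


wire = b"5\r\nHello\r\n6\r\n World\r\n0\r\n\r\n"
print(b"".join(iter_chunks(io.BytesIO(wire))))  # b'Hello World'
```

Because the function is a generator, the caller can process each chunk as soon as it arrives instead of waiting for the whole body.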

HTTP Chunked Encoding Example in Action

Here’s how chunked encoding looks in a real HTTP response:

HTTP/1.1 200 OK

Transfer-Encoding: chunked

5

Hello

6

 World

F

 This is a test

0

Breaking it Down:

- Header:

- Transfer-Encoding: chunked tells the client to expect chunked data instead of a full response.

- Chunks:

- 5\r\nHello\r\n → The first chunk contains 5 bytes (Hello).

- 6\r\n World\r\n → The second chunk contains 6 bytes ( World, including the leading space).

- F\r\n This is a test\r\n → The third chunk contains 15 bytes ( This is a test, including the leading space); 15 is F in hexadecimal.

- End of Data:

- 0\r\n\r\n → A zero-size chunk followed by an empty line marks the end of transmission.

Client Processing:

- The browser reads each chunk sequentially and can start rendering before the full response has arrived.

- Once the zero-size chunk is received, the client knows the response is complete.

This method allows streaming even when the total response size is unknown at the start.
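To see this end to end, the sketch below uses only Python's standard library: a small local server writes a chunked response by hand, and `http.client` reads it back, decoding the chunks transparently. The handler class, the `PARTS` payload, and the `fetch_demo` helper are all hypothetical names for this illustration, and the server is meant for local experimentation only.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

PARTS = [b"Hello", b" World"]  # pieces of the body, sent one chunk each


class ChunkedHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # chunked encoding requires HTTP/1.1

    def do_GET(self):
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Transfer-Encoding", "chunked")
        self.send_header("Connection", "close")  # one request per connection
        self.end_headers()
        for part in PARTS:
            # hexadecimal size, CRLF, data, CRLF
            self.wfile.write(b"%x\r\n%s\r\n" % (len(part), part))
            self.wfile.flush()
        self.wfile.write(b"0\r\n\r\n")  # zero-size chunk ends the body

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


def fetch_demo():
    """Start the server on a free local port, fetch once, and shut down."""
    server = HTTPServer(("127.0.0.1", 0), ChunkedHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        port = server.server_address[1]
        conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
        conn.request("GET", "/")
        response = conn.getresponse()
        encoding = response.getheader("Transfer-Encoding")
        body = response.read()  # http.client reassembles the chunks
        conn.close()
        return encoding, body
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(fetch_demo())  # ('chunked', b'Hello World')
```

The client never sees the size lines: the HTTP library strips the framing and hands back only the reassembled data.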

Differences Between HTTP/1.1 Chunked Encoding and HTTP/2 Data Frames

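HTTP/1.1 chunked encoding and HTTP/2 data frames both handle streaming data, but they work differently. Chunked encoding is a text-based framing inside a single HTTP/1.1 message body: each chunk starts with a hexadecimal size line, and a zero-size chunk ends the body. HTTP/2 does not use chunked encoding at all. It splits the body into binary DATA frames, each with a fixed 9-byte header that carries the payload length and a stream identifier, marks the end of the body with the END_STREAM flag, and can interleave frames from many streams on one connection.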
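To make the contrast concrete, the sketch below frames the same 5-byte payload both ways. The HTTP/2 side builds only the 9-byte frame header plus payload (24-bit length, 8-bit type, 8-bit flags, 31-bit stream identifier); it ignores padding, flow control, and frame size limits negotiated by settings, and the function name `http2_data_frame` is an assumption for this example.

```python
import struct

DATA_FRAME_TYPE = 0x0  # frame type for DATA frames
END_STREAM = 0x1       # flag marking the last frame of a stream


def http2_data_frame(stream_id: int, payload: bytes, end_stream: bool = False) -> bytes:
    """Build one unpadded HTTP/2 DATA frame (header plus payload)."""
    if len(payload) >= 2 ** 24:
        raise ValueError("payload does not fit the 24-bit length field")
    length = struct.pack(">I", len(payload))[1:]  # keep the low 3 bytes
    flags = END_STREAM if end_stream else 0
    header = length + struct.pack(">BBI", DATA_FRAME_TYPE, flags, stream_id & 0x7FFFFFFF)
    return header + payload


# HTTP/1.1: textual size line per chunk, zero-size chunk as terminator.
chunked = b"5\r\nHello\r\n" + b"0\r\n\r\n"
# HTTP/2: binary header, END_STREAM flag instead of a terminator chunk.
frame = http2_data_frame(1, b"Hello", end_stream=True)

print(chunked)      # b'5\r\nHello\r\n0\r\n\r\n'
print(frame.hex())  # 00000500010000000148656c6c6f
```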

Best Practices for Implementing Chunked Encoding

HTTP Chunked Encoding plays a key role in ensuring smooth and efficient data transfer, particularly in web applications dealing with large or dynamic data sets.

To achieve this, several best practices should be followed, focusing on accuracy, thorough testing, and proper handling of the encoding process.

1. Ensuring Accurate Chunk Sizes

The foundation of chunked encoding lies in correctly sized chunks. Each chunk must be accurately measured and declared. The size of each chunk, indicated in hexadecimal format in the chunk header, should precisely match the length of the data it precedes.

This accuracy is important because any discrepancy between the declared size and the actual data can lead to transmission errors, causing the client to misinterpret the end of a chunk or the start of a new one. Accurate chunk sizing ensures a seamless assembly of the complete data set on the client side.
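One common source of such discrepancies is measuring characters instead of bytes. The short sketch below, using a hypothetical helper named `chunk_from_text`, shows the difference for text containing non-ASCII characters.

```python
def chunk_from_text(text: str, encoding: str = "utf-8") -> bytes:
    """Encode text first, then declare the size of the encoded bytes."""
    payload = text.encode(encoding)
    # The chunk size is the byte length of the payload, not len(text).
    return b"%x\r\n" % len(payload) + payload + b"\r\n"


text = "héllo ✓"
print(len(text))                  # 7 characters
print(len(text.encode("utf-8")))  # 10 bytes: "é" takes 2 bytes, "✓" takes 3
print(chunk_from_text(text)[:3])  # b'a\r\n' (10 is a in hexadecimal)
```

Declaring 7 here instead of 10 would make the receiver treat the last three payload bytes as the start of the next chunk.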

2. Correct Implementation of Transfer-Encoding: chunked Header

Implementing the Transfer-Encoding: chunked header correctly is fundamental. This header must be included in the response when chunked encoding is used. It informs the client that the data will be received in chunks, allowing the client to process the response appropriately.

Failure to include this header or incorrect implementation can lead to confusion in the client, potentially causing it to expect a single block of data, thereby disrupting the entire communication process.

3. Thorough Testing Across Different Chunk Sizes

Rigorous testing is essential, especially in scenarios where various sizes of chunks are transmitted. Different sizes can behave differently in terms of performance and compatibility with different clients and network conditions.

Testing with a wide range of chunk sizes helps identify the optimal chunk size for specific scenarios and ensures compatibility across different client implementations and network environments. This testing should also consider edge cases, such as very small or very large chunks, to ensure robustness under all conditions.

4. Handling Chunked Encoding Correctly to Prevent Errors

Proper handling of chunked encoding, both on the server and client sides, is vital to prevent errors. This includes correctly parsing the chunk headers, accurately processing the chunk sizes, and ensuring that the end of each chunk and the final zero-size chunk are correctly identified.

When dealing with large objects, such as graphics images or other types of binary data, streaming should be supported to optimize uploading and downloading of these resources.

Special attention should be given to error handling, ensuring that any issues in chunk transmission or reception are gracefully managed to prevent disruption of the entire data stream.
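As one example of careful parsing, the sketch below validates a chunk-size line before trusting it. Python's `int(value, 16)` on its own accepts inputs such as `+5` or `0x5`, which are not valid chunk sizes, so the check is done explicitly. The function name, the size limit, and the choice to reject whitespace around the size are assumptions for this illustration; it is intentionally stricter than a lenient parser would be.

```python
import re

_HEX_SIZE = re.compile(rb"[0-9A-Fa-f]{1,8}")
MAX_CHUNK_SIZE = 16 * 1024 * 1024  # hypothetical limit chosen for this sketch


def parse_chunk_size(line: bytes, max_size: int = MAX_CHUNK_SIZE) -> int:
    """Parse a chunk-size line such as b"1A\\r\\n", rejecting malformed input."""
    if not line.endswith(b"\r\n"):
        raise ValueError("chunk-size line must end with CRLF")
    size_field = line[:-2].split(b";", 1)[0]  # drop optional chunk extensions
    if not _HEX_SIZE.fullmatch(size_field):
        raise ValueError(f"invalid chunk size: {size_field!r}")
    size = int(size_field, 16)
    if size > max_size:
        raise ValueError("chunk larger than the configured limit")
    return size


print(parse_chunk_size(b"1A\r\n"))            # 26
print(parse_chunk_size(b"5;name=value\r\n"))  # 5
```

Raising an error on the first malformed line lets the caller abort the transfer cleanly rather than misreading the rest of the stream.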

Why Use Chunked Encoding Instead of Content-Length?

Normally, HTTP responses include a Content-Length header to tell the client how much data to expect. But what happens if the total size isn’t known?

- Dynamically Generated Content

- APIs and web servers often generate data on the fly (e.g., real-time logs, live chat messages).

- Chunked encoding allows sending partial data without waiting for the full response to be ready.

- Large File Transfers & Streaming

- Sending large files (like videos, PDFs) without buffering everything in memory first.

- Allows the client to start processing the data immediately, improving user experience.

- Improving Web Performance

- Enables progressive rendering in web browsers—content appears as soon as it's available rather than waiting for the entire response.

- Especially useful for long-running database queries or complex HTML generation.

- Avoiding Memory Issues

- Without chunked encoding, a server that doesn't know the response size in advance must buffer the entire response to compute Content-Length, or close the connection to signal the end.

- Chunking reduces memory usage by sending data in pieces.

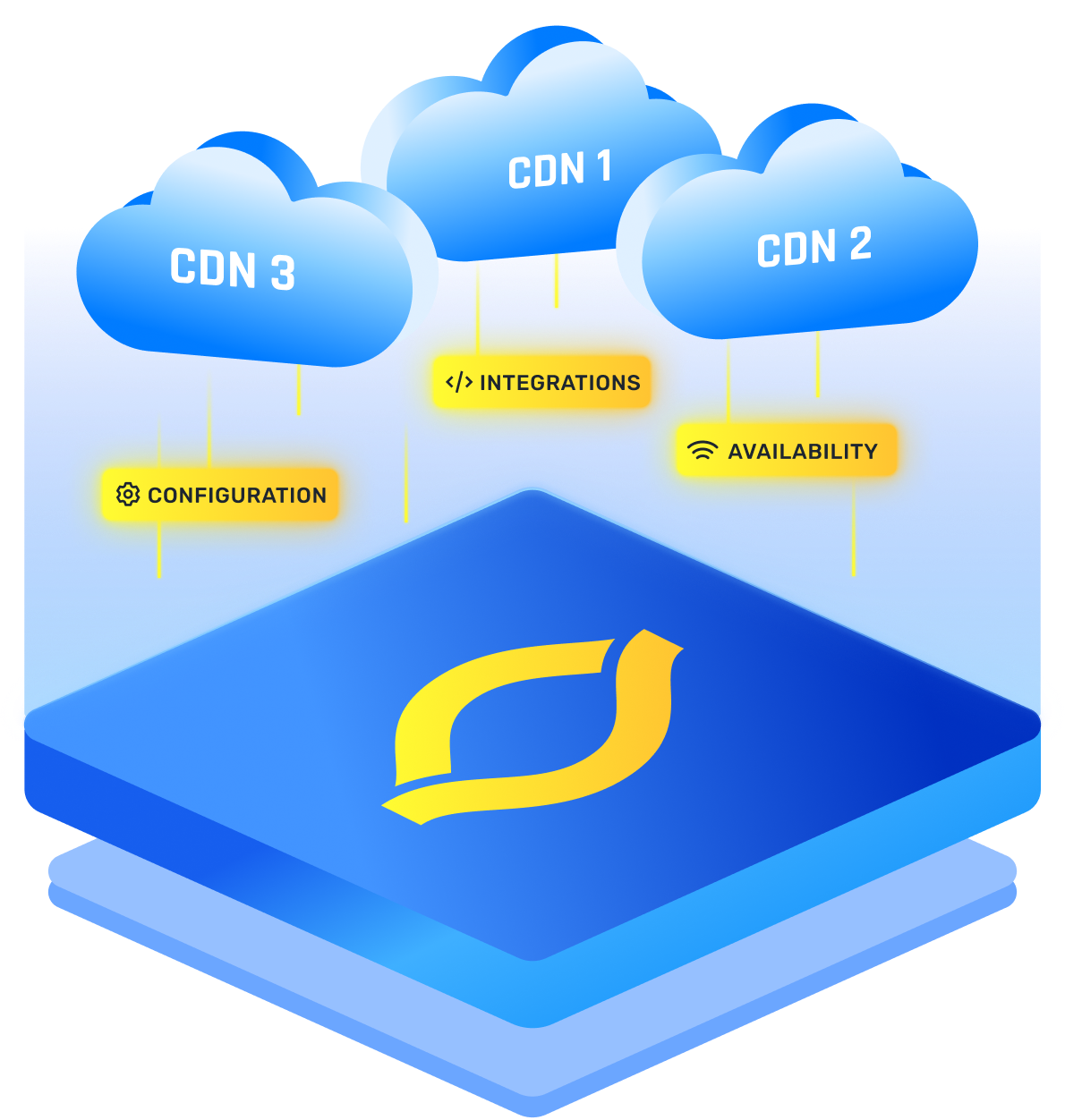

- Supporting HTTP Proxies & Load Balancers

- Some proxies forward data as it arrives rather than buffering entire responses.

- Chunked encoding lets reverse proxies, CDNs, and caching servers relay a response of unknown length while still being able to tell where it ends.
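The memory benefit comes from producing the body lazily. The sketch below wraps any iterable of byte strings, such as rows produced one at a time by a database query, in a generator that yields framed chunks; only one piece is held in memory at a time. The names `chunked_body` and `rows` are hypothetical.

```python
from typing import Iterable, Iterator


def chunked_body(parts: Iterable[bytes]) -> Iterator[bytes]:
    """Lazily frame each piece as a chunk, then emit the terminator."""
    for part in parts:
        if part:  # skip empty pieces: a zero-size chunk would end the body early
            yield b"%x\r\n" % len(part) + part + b"\r\n"
    yield b"0\r\n\r\n"


def rows() -> Iterator[bytes]:
    """Stand-in for content generated on the fly."""
    yield b"<tr><td>1</td></tr>"
    yield b""  # an empty piece, which must not become a chunk
    yield b"<tr><td>2</td></tr>"


for wire_bytes in chunked_body(rows()):
    print(wire_bytes)
```

Skipping empty pieces matters: if a generator happened to yield an empty byte string and it were framed as a chunk, the receiver would treat it as the end of the response.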

When NOT to Use Chunked Encoding?

- If the total response size is already known (e.g., sending a 2MB image), Content-Length is more efficient.

- Some legacy clients and HTTP parsers may not support chunked encoding properly.
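The choice between the two framing methods can be sketched as a small helper, assuming the server tracks the body length as an integer when it is known and as `None` otherwise. The function name `framing_headers` is hypothetical. Note that a sender should never emit both headers on the same message.

```python
from typing import Dict, Optional


def framing_headers(body_length: Optional[int]) -> Dict[str, str]:
    """Pick Content-Length when the size is known, chunked encoding otherwise."""
    if body_length is not None:
        return {"Content-Length": str(body_length)}
    return {"Transfer-Encoding": "chunked"}


print(framing_headers(2 * 1024 * 1024))  # {'Content-Length': '2097152'}
print(framing_headers(None))             # {'Transfer-Encoding': 'chunked'}
```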

Conclusion

In essence, HTTP Chunked Encoding breaks down the conventional approach of sending a complete data set in one go, instead opting for a segmented transfer of data in the form of chunks. The anatomy of each chunk, with its own size header and sequential processing, not only ensures an efficient transfer but also enhances the responsiveness and reliability of web applications.

FAQs

1. How Does HTTP Chunked Transfer Encoding Work?

Chunked encoding breaks HTTP responses into chunks of data, each with its own size header. The server streams chunks to the client instead of sending everything at once. This improves performance for large or dynamically generated content, allowing users to see partial results immediately.

2. When Should I Use HTTP Chunked Encoding?

Use chunked encoding when the total size of a response is unknown or large. It’s ideal for streaming APIs, real-time applications, large file transfers, and progressive web rendering. It also reduces memory usage on the server since data is sent as it’s generated.

3. What Are the Limitations of HTTP Chunked Encoding?

- Not all clients support chunked encoding: it is required of HTTP/1.1 recipients, but HTTP/1.0 clients and some legacy proxies don't understand it.

- Chunk metadata increases overhead, making it less efficient for small static files.

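- More prone to HTTP request smuggling attacks if not properly implemented, for example when a proxy and the server behind it disagree about how to read a message that carries both Content-Length and Transfer-Encoding.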

- Doesn’t encrypt data—use HTTPS for secure transfers.
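On the request smuggling point, one conservative policy is to refuse any message that carries both framing headers, so that no two parties can interpret its length differently. The sketch below shows that check under the assumption that headers are available as a simple name-to-value mapping; the function name is hypothetical, and real servers may choose a different policy.

```python
from typing import Mapping


def reject_ambiguous_framing(headers: Mapping[str, str]) -> None:
    """Raise if a message declares its length in two conflicting ways."""
    names = {name.lower() for name in headers}  # header names are case-insensitive
    if "transfer-encoding" in names and "content-length" in names:
        raise ValueError("message has both Transfer-Encoding and Content-Length")


reject_ambiguous_framing({"Transfer-Encoding": "chunked"})  # accepted
try:
    reject_ambiguous_framing({"Content-Length": "4", "transfer-encoding": "chunked"})
except ValueError as error:
    print("rejected:", error)
```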
